Hadoop Basics Tutorial (6)

HDFS Cluster Configuration and Startup

1. Cluster node configuration

| Host | IP | Role |
|---|---|---|
| master | 192.168.3.77 | NameNode / DataNode |
| slave1 | 192.168.3.78 | DataNode |
| slave2 | 192.168.3.79 | SecondaryNameNode / DataNode |

2. Check node host names and network connectivity

1) Check that the host name of each of the three nodes is correct.

# Check the host name of master

[root@master sbin]# hostname

master

# Check the host name of slave1

[root@slave1 ~]# hostname

slave1

# Check the host name of slave2

[root@slave2 ~]# hostname

slave2

2) Check that master can ping each node.

[root@master sbin]# ping master

[root@master sbin]# ping slave1

[root@master sbin]# ping slave2
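The three ping checks can also be run in one pass. The following is a minimal sketch, assuming the Linux (iputils) `ping` options `-c` (packet count) and `-W` (timeout in seconds); the function name `check_nodes` is hypothetical and not part of Hadoop.

```shell
#!/bin/sh
# Ping each host once and report which ones answer.
# Host names are the ones from the table in section 1.
check_nodes() {
    failed=0
    for host in "$@"; do
        # -c 1: send a single packet; -W 2: wait at most 2 seconds for a reply
        if ping -c 1 -W 2 "$host" >/dev/null 2>&1; then
            echo "$host: reachable"
        else
            echo "$host: UNREACHABLE"
            failed=1
        fi
    done
    # Non-zero exit status if at least one host did not answer
    return "$failed"
}
```

On master this would be called as `check_nodes master slave1 slave2`; a non-zero exit status means at least one node did not answer.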

3. Install hadoop-2.9.2

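Install the same version, hadoop-2.9.2, on all three nodes. For the HDFS cluster to start correctly, at least the following two conditions must hold:

- /etc/hosts and the core-site.xml, hdfs-site.xml, and slaves files under $HADOOP_HOME/etc/hadoop are identical on every node.

- master can log in to slave1 and slave2 without a password.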

The configuration files are as follows:

/etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.3.77 master

192.168.3.78 slave1

192.168.3.79 slave2
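Because every node needs the same three mappings, it can help to check a hosts file mechanically. The sketch below is one way to do that; `check_hosts_file` and its `ip=name` argument format are hypothetical choices made for this example.

```shell
#!/bin/sh
# Check that a hosts file maps each expected name to the expected IP.
# Arguments: path to the hosts file, then one or more "ip=name" pairs.
check_hosts_file() {
    hosts_file=$1
    shift
    status=0
    for pair in "$@"; do
        ip=${pair%%=*}
        name=${pair#*=}
        # A line matches when its first field is the IP and the name appears
        # among the following fields, before any trailing "#" comment.
        if awk -v ip="$ip" -v name="$name" '
            $1 == ip {
                for (i = 2; i <= NF; i++) {
                    if ($i ~ /^#/) break
                    if ($i == name) found = 1
                }
            }
            END { exit (found ? 0 : 1) }
        ' "$hosts_file"; then
            echo "ok: $name -> $ip"
        else
            echo "missing: $name -> $ip"
            status=1
        fi
    done
    return "$status"
}
```

On each node, a call such as `check_hosts_file /etc/hosts 192.168.3.77=master 192.168.3.78=slave1 192.168.3.79=slave2` would print one line per mapping.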

core-site.xml

<configuration>

<!-- Address of the HDFS master (NameNode) -->

<property>

<name>fs.defaultFS</name>

<!-- master is the Linux host name -->

<value>hdfs://master:9000</value>

</property>

<!-- Directory where Hadoop stores the files it generates at runtime -->

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/local/hadoop-2.9.2/tmp</value>

</property>

</configuration>

hdfs-site.xml

<configuration>

<!-- Number of HDFS replicas (the cluster has three machines, so up to three copies are possible) -->

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<!-- Configure the SecondaryNameNode -->

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>slave2:50090</value>

</property>

<property>

<name>dfs.namenode.datanode.registration.ip-hostname-check</name>

<value>false</value>

</property>

</configuration>
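To confirm what a node actually has in core-site.xml or hdfs-site.xml, a property can be read back from the file. This is a line-based sketch rather than a real XML parser: it assumes `<name>` and `<value>` each sit on their own line, as in the listings above, and `get_hadoop_property` is a hypothetical helper name.

```shell
#!/bin/sh
# Print the <value> that follows <name>KEY</name> in a Hadoop *-site.xml file.
# Arguments: path to the XML file, property name.
get_hadoop_property() {
    conf_file=$1
    key=$2
    awk -v key="$key" '
        # Remember that the wanted <name> line has been seen
        index($0, "<name>" key "</name>") { want = 1; next }
        # The next <value> line inside the same <property> holds the answer
        want && /<value>.*<\/value>/ {
            sub(/.*<value>/, "")
            sub(/<\/value>.*/, "")
            print
            exit
        }
        # Give up at the end of the property block
        /<\/property>/ { want = 0 }
    ' "$conf_file"
}
```

For example, `get_hadoop_property "$HADOOP_HOME/etc/hadoop/core-site.xml" fs.defaultFS` should print `hdfs://master:9000` for the file shown above. On a node with Hadoop on the PATH, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop itself resolved.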

slaves

master

slave1

slave2
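One way to keep the three Hadoop configuration files identical is to edit them on master and copy them to the other nodes. The sketch below only prints the `scp` commands so they can be reviewed first; `print_sync_commands` is a hypothetical name, and the `root` account is assumed because the transcripts in this tutorial run as root.

```shell
#!/bin/sh
# Print (not run) the scp commands that would copy the shared Hadoop
# configuration files from this machine to each listed host.
# Arguments: configuration directory, then one or more host names.
print_sync_commands() {
    conf_dir=$1
    shift
    for host in "$@"; do
        for f in core-site.xml hdfs-site.xml slaves; do
            echo "scp $conf_dir/$f root@$host:$conf_dir/$f"
        done
    done
}
```

Running `print_sync_commands /usr/local/hadoop-2.9.2/etc/hadoop slave1 slave2` on master lists six commands; piping that output to `sh` would execute them. /etc/hosts can be copied the same way.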

4. Test passwordless login

On master, test passwordless login to slave1 and slave2.

[root@master hadoop]# ssh slave1

Last login: Tue May 31 08:52:41 2022 from master

[root@master ~]# ssh slave2

Last login: Tue May 31 08:52:57 2022 from master

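If passwordless login does not work, run the following commands on master. They assume a key pair already exists at ~/.ssh/id_rsa; if it does not, create one first with ssh-keygen -t rsa.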

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

chmod 600 ~/.ssh/authorized_keys

chmod 700 ~/.ssh

scp ~/.ssh/authorized_keys root@slave1:/root/.ssh/

scp ~/.ssh/authorized_keys root@slave2:/root/.ssh/
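After copying the keys, the result can be verified without risking an interactive password prompt. The following is a minimal sketch: `check_passwordless` is a hypothetical name, while `BatchMode` and `ConnectTimeout` are standard OpenSSH client options.

```shell
#!/bin/sh
# Report, for each host, whether key-based SSH login as root works
# without any prompt.
check_passwordless() {
    status=0
    for host in "$@"; do
        # BatchMode=yes makes ssh fail instead of asking for a password;
        # "true" is a harmless command to run on the remote side.
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "root@$host" true 2>/dev/null; then
            echo "$host: passwordless login OK"
        else
            echo "$host: passwordless login FAILED"
            status=1
        fi
    done
    return "$status"
}
```

On master, `check_passwordless slave1 slave2` should report OK for both hosts before the cluster is started. Note that the `scp` commands above overwrite any existing authorized_keys on the slaves; where that file already holds other keys, `ssh-copy-id root@slave1` appends the key instead.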

5. Start the HDFS cluster

[root@master sbin]# start-dfs.sh

Starting namenodes on [master]

master: starting namenode, logging to /usr/local/hadoop-2.9.2/logs/hadoop-root-namenode-master.out

slave2: starting datanode, logging to /usr/local/hadoop-2.9.2/logs/hadoop-root-datanode-slave2.out

slave1: starting datanode, logging to /usr/local/hadoop-2.9.2/logs/hadoop-root-datanode-slave1.out

master: starting datanode, logging to /usr/local/hadoop-2.9.2/logs/hadoop-root-datanode-master.out

Starting secondary namenodes [slave2]

slave2: starting secondarynamenode, logging to /usr/local/hadoop-2.9.2/logs/hadoop-root-secondarynamenode-slave2.out

[root@master sbin]# start-yarn.sh

starting yarn daemons

starting resourcemanager, logging to /usr/local/hadoop-2.9.2/logs/yarn-root-resourcemanager-master.out

slave2: starting nodemanager, logging to /usr/local/hadoop-2.9.2/logs/yarn-root-nodemanager-slave2.out

slave1: starting nodemanager, logging to /usr/local/hadoop-2.9.2/logs/yarn-root-nodemanager-slave1.out

master: starting nodemanager, logging to /usr/local/hadoop-2.9.2/logs/yarn-root-nodemanager-master.out

Check the running processes on master.

[root@master sbin]# jps

16208 DataNode

17201 Jps

16009 NameNode

16953 ResourceManager

17103 NodeManager

Check the running processes on slave1.

[root@slave1 hadoop]# jps

15712 Jps

14692 DataNode

15387 NodeManager

Check the running processes on slave2.

[root@slave2 hadoop]# jps

15504 NodeManager

15131 SecondaryNameNode

15964 Jps

14782 DataNode
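Comparing the `jps` listings against the expected roles by eye is easy to get wrong, since NameNode and SecondaryNameNode look alike. The sketch below reads `jps` output on standard input and names any expected daemon that is absent; `missing_daemons` is a hypothetical helper, and the expected daemon lists are taken from the outputs above.

```shell
#!/bin/sh
# Read `jps` output on stdin and report every expected daemon that is absent.
# Arguments: the daemon class names expected on this node.
# Exit status is 0 when nothing is missing.
missing_daemons() {
    jps_output=$(cat)
    missing=""
    for daemon in "$@"; do
        # jps prints "<pid> <ClassName>"; compare the second field exactly so
        # that NameNode does not match SecondaryNameNode.
        if ! printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d { f = 1 } END { exit (f ? 0 : 1) }'; then
            missing="$missing $daemon"
        fi
    done
    if [ -z "$missing" ]; then
        return 0
    fi
    echo "missing:$missing"
    return 1
}
```

For example, on master: `jps | missing_daemons NameNode DataNode ResourceManager NodeManager`; on slave2: `jps | missing_daemons DataNode SecondaryNameNode NodeManager`.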

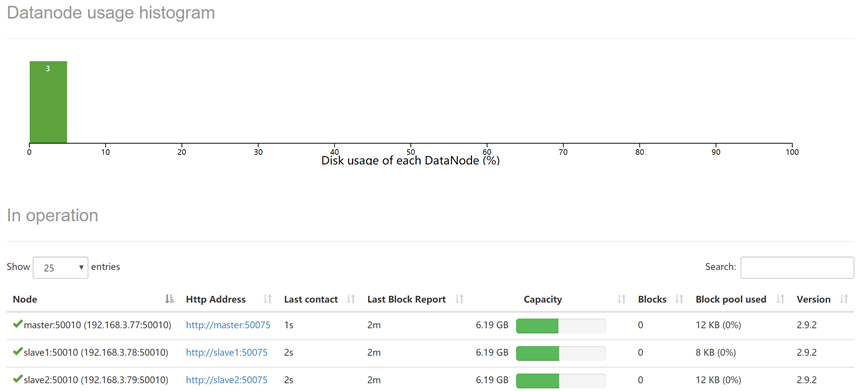

打开浏览器,地址输入:http://192.168.3.77:50070,在DataNodes标签可以发现三个datanodes节点。如下图所示:
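The number of live DataNodes can also be read from the command line with `hdfs dfsadmin -report`. The sketch below extracts that count; it assumes the report contains a line of the form `Live datanodes (N):`, and `live_datanodes` is a hypothetical helper name.

```shell
#!/bin/sh
# Extract the live DataNode count from `hdfs dfsadmin -report` output on stdin.
# Prints nothing if no "Live datanodes (N):" line is found.
live_datanodes() {
    sed -n 's/^Live datanodes (\([0-9][0-9]*\)):.*/\1/p'
}
```

On master, `hdfs dfsadmin -report | live_datanodes` should print 3 for the cluster described here.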

To shut down the HDFS cluster, run the following commands on master:

[root@master sbin]# stop-dfs.sh

Stopping namenodes on [master]

master: stopping namenode

master: stopping datanode

slave2: stopping datanode

slave1: stopping datanode

Stopping secondary namenodes [slave2]

slave2: stopping secondarynamenode

[root@master sbin]# stop-yarn.sh

stopping yarn daemons

stopping resourcemanager

master: stopping nodemanager

slave2: stopping nodemanager

slave1: stopping nodemanager

master: nodemanager did not stop gracefully after 5 seconds: killing with kill -9

slave2: nodemanager did not stop gracefully after 5 seconds: killing with kill -9

slave1: nodemanager did not stop gracefully after 5 seconds: killing with kill -9

no proxyserver to stop

6. Troubleshooting common problems

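A Hadoop pseudo-cluster or cluster starts successfully, but the number of live nodes stays at 0.

- Delete the files under tmp/dfs in the Hadoop installation directory (on every node).

- Delete the nm-local-dir folder.

- Delete all files and folders in the logs folder (this step is probably optional).

- Finally, reformat the cluster.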
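The cleanup steps above can be sketched as a small helper that only prints the commands, so they can be reviewed before anything is deleted. The paths are assumptions: hadoop.tmp.dir is taken to be the tmp directory under the installation path, as in core-site.xml above, and nm-local-dir is assumed to sit under it (the YARN default). `print_reset_commands` is a hypothetical name. These steps erase all HDFS data, so they suit a test cluster that has been stopped first.

```shell
#!/bin/sh
# Print (not run) the cleanup commands for one node.
# Argument: the Hadoop installation directory on that node.
print_reset_commands() {
    hadoop_home=$1
    # HDFS metadata and block data under hadoop.tmp.dir
    echo "rm -rf $hadoop_home/tmp/dfs/*"
    # NodeManager local directory (assumed default location)
    echo "rm -rf $hadoop_home/tmp/nm-local-dir"
    # Old log files
    echo "rm -rf $hadoop_home/logs/*"
}
```

Running `print_reset_commands /usr/local/hadoop-2.9.2` on each node lists the three deletions. Once they have been applied everywhere, `hdfs namenode -format` on master reformats the NameNode before the cluster is started again.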