Collecting File Contents and Uploading Them to HDFS

Let's look at an example: storing the collected data in separate directories by day and by type.

The steps are as follows:

1. Raw log data

{"id":"14943445328940974601","uid":"840717325115457536","lat":"53.530598","lnt":"-2.5620373","hots":0,"title":"0","status":"1","topicId":"0","end_time":"1494344570","watch_num":0,"share_num":"1","replay_url":null,"replay_num":0,"start_time":"1494344544","timestamp":1494344571,"type":"video_info"}

{"uid":"861848974414839801","nickname":"mick","usign":"","sex":1,"birthday":"","face":"","big_face":"","email":"abc@qq.com","mobile":"","reg_type":"102","last_login_time":"1494344580","reg_time":"1494344580","last_update_time":"1494344580","status":"5","is_verified":"0","verified_info":"","is_seller":"0","level":1,"exp":0,"anchor_level":0,"anchor_exp":0,"os":"android","timestamp":1494344580,"type":"user_info"}

{"send_id":"834688818270961664","good_id":"223","video_id":"14943443045138661356","gold":"10","timestamp":1494344574,"type":"gift_record"}

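This data contains three types of records: video information, user information, and gift records. All records are in JSON format, and they share one feature: each contains a type field whose value identifies the record type.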
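
To make the role of the type field concrete, here is a minimal Python sketch that reads JSON log lines and groups them by type. The records are abbreviated copies of the three samples above (most fields are omitted for brevity), and the names `record_type` and `count_by_type` are hypothetical helpers introduced only for illustration.

```python
import json
from collections import Counter

# Abbreviated copies of the three sample records (most fields omitted).
SAMPLE_LINES = [
    '{"id":"14943445328940974601","uid":"840717325115457536","timestamp":1494344571,"type":"video_info"}',
    '{"uid":"861848974414839801","nickname":"mick","timestamp":1494344580,"type":"user_info"}',
    '{"send_id":"834688818270961664","good_id":"223","gold":"10","timestamp":1494344574,"type":"gift_record"}',
]


def record_type(line):
    """Return the value of the `type` field of one JSON log line."""
    return json.loads(line)["type"]


def count_by_type(lines):
    """Count how many records of each type appear in the given lines."""
    return Counter(record_type(line) for line in lines)


if __name__ == "__main__":
    for log_type, count in count_by_type(SAMPLE_LINES).items():
        print(log_type, count)
```

Because every record carries the same field, a single rule keyed on type is enough to route all three kinds of data.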

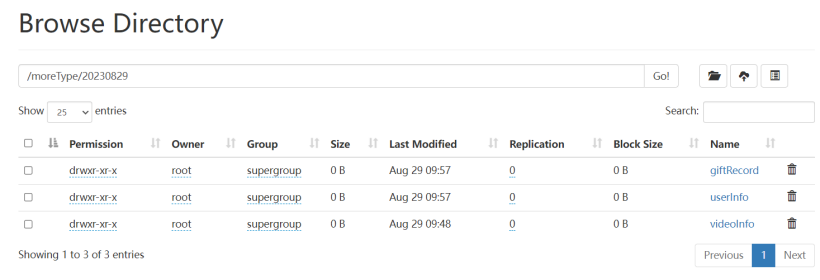

The directory layout that ultimately needs to be generated in HDFS looks roughly like the one shown in the figure.

2. Create the Agent configuration file

In the $FLUME_HOME/conf directory, create a new file named file-to-hdfs-moreType.conf.

# The agent is named a1

# Name the source, channel, and sink components

a1.sources = r1

a1.channels = c1

a1.sinks = k1

# Configure the source component

a1.sources.r1.type = exec

a1.sources.r1.command = tail -F /data/log/moreType.log

# Configure the interceptors [multiple interceptors run in the order listed]

a1.sources.r1.interceptors = i1 i2 i3 i4

a1.sources.r1.interceptors.i1.type = search_replace

a1.sources.r1.interceptors.i1.searchPattern = "type":"video_info"

a1.sources.r1.interceptors.i1.replaceString = "type":"videoInfo"

a1.sources.r1.interceptors.i2.type = search_replace

a1.sources.r1.interceptors.i2.searchPattern = "type":"user_info"

a1.sources.r1.interceptors.i2.replaceString = "type":"userInfo"

a1.sources.r1.interceptors.i3.type = search_replace

a1.sources.r1.interceptors.i3.searchPattern = "type":"gift_record"

a1.sources.r1.interceptors.i3.replaceString = "type":"giftRecord"

a1.sources.r1.interceptors.i4.type = regex_extractor

a1.sources.r1.interceptors.i4.regex = "type":"(\\w+)"

a1.sources.r1.interceptors.i4.serializers = s1

a1.sources.r1.interceptors.i4.serializers.s1.name = logType

# Configure the channel component

a1.channels.c1.type = file

a1.channels.c1.checkpointDir = /usr/local/flume/data/moreType/checkpoint

a1.channels.c1.dataDirs = /usr/local/flume/data/moreType/data

# Configure the sink component

a1.sinks.k1.type = hdfs

a1.sinks.k1.hdfs.path = hdfs://master:9000/moreType/%Y%m%d/%{logType}

a1.sinks.k1.hdfs.fileType = DataStream

a1.sinks.k1.hdfs.writeFormat = Text

a1.sinks.k1.hdfs.rollInterval = 3600

a1.sinks.k1.hdfs.rollSize = 134217728

a1.sinks.k1.hdfs.rollCount = 0

a1.sinks.k1.hdfs.useLocalTimeStamp = true

# Add a file prefix and suffix

a1.sinks.k1.hdfs.filePrefix = data

a1.sinks.k1.hdfs.fileSuffix = .log

# Wire the components together

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1
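
The interceptor chain and the sink path in this configuration can be easier to follow with a small simulation. The Python sketch below is not Flume code; it only mimics, under simplifying assumptions, what i1 to i4 do to one event and how the %Y%m%d and %{logType} placeholders in hdfs.path would then be filled in. The function names `apply_interceptors` and `resolve_path` are hypothetical, and in this sketch a missing header is simply replaced by an empty string.

```python
import re
from datetime import datetime

# (searchPattern, replaceString) pairs mirroring interceptors i1, i2 and i3.
REPLACEMENTS = [
    ('"type":"video_info"', '"type":"videoInfo"'),
    ('"type":"user_info"', '"type":"userInfo"'),
    ('"type":"gift_record"', '"type":"giftRecord"'),
]

# Same pattern as i4. The config file writes \\w because backslashes
# are escaped in properties files; the effective regex is \w.
TYPE_REGEX = re.compile(r'"type":"(\w+)"')


def apply_interceptors(body):
    """Run the replacements in order, then extract the type into a header."""
    headers = {}
    for pattern, replacement in REPLACEMENTS:
        body = re.sub(pattern, replacement, body)
    match = TYPE_REGEX.search(body)
    if match:
        headers["logType"] = match.group(1)
    return headers, body


def resolve_path(template, headers, when):
    """Fill %{header} and %Y/%m/%d placeholders (only these are handled)."""
    path = re.sub(r"%\{(\w+)\}", lambda m: headers.get(m.group(1), ""), template)
    for code in ("%Y", "%m", "%d"):
        path = path.replace(code, when.strftime(code))
    return path


if __name__ == "__main__":
    event = '{"gold":"10","timestamp":1494344574,"type":"gift_record"}'
    headers, body = apply_interceptors(event)
    print(headers, body)
    print(resolve_path("hdfs://master:9000/moreType/%Y%m%d/%{logType}",
                       headers, datetime.now()))
```

Note that the pattern starts with a double quote, so a field such as "reg_type":"102" in the user record does not match; only the real type field is captured.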

3. Create the test log data

Write the raw data into the file /data/log/moreType.log.
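
One possible way to produce the test file is a short Python helper that appends records to the log, one JSON object per line. This is only a sketch: `append_records` is a hypothetical helper, and only the gift record from step 1 is included here; the other two sample records can be added to the list in the same way.

```python
from pathlib import Path

# Path tailed by the exec source in the agent configuration.
LOG_FILE = Path("/data/log/moreType.log")

# The gift record from step 1, copied as a single line.
GIFT_RECORD = (
    '{"send_id":"834688818270961664","good_id":"223",'
    '"video_id":"14943443045138661356","gold":"10",'
    '"timestamp":1494344574,"type":"gift_record"}'
)


def append_records(path, records):
    """Append each record as one line and return how many were written."""
    path = Path(path)
    # Create the parent directory if it does not exist yet.
    path.parent.mkdir(parents=True, exist_ok=True)
    written = 0
    with path.open("a", encoding="utf-8") as log:
        for record in records:
            log.write(record + "\n")
            written += 1
    return written
```

Calling `append_records(LOG_FILE, [GIFT_RECORD])` adds one line to the file. Appending, rather than overwriting, matters here because the source runs tail -F and picks up newly added lines.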

4. Start the Agent and test

bin/flume-ng agent --name a1 --conf conf --conf-file conf/file-to-hdfs-moreType.conf -Dflume.root.logger=INFO,console

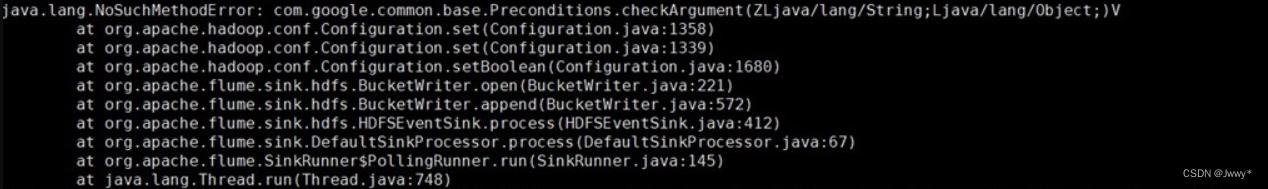

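Note:

If the Flume log shows the following error: java.lang.NoSuchMethodError: com.google.common.base.Preconditions.checkArgument(ZLjava/lang/String;Ljava/lang/Object;)V

Errors of this kind usually mean that a jar is either missing or in conflict. Here the most likely cause is that the guava jar in Hadoop's share/hadoop/common/lib directory conflicts with the guava jar in Flume's lib directory: the versions differ (Hadoop ships the newer one), which triggers the error. Renaming the guava jar in Flume's lib directory as a backup, or deleting it, resolves the problem.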
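
Before renaming or deleting anything, it can help to confirm that the two directories really hold different guava versions. The following Python sketch lists the guava jars found in each directory. It assumes the jars are named guava-<version>.jar and that the FLUME_HOME and HADOOP_HOME environment variables point at the two installations; both are assumptions about the local setup, and `guava_versions` is a hypothetical helper.

```python
import os
import re
from pathlib import Path

# Assumed naming scheme: guava-<version>.jar
GUAVA_JAR = re.compile(r"^guava-(.+)\.jar$")


def guava_versions(lib_dir):
    """Return the sorted version strings of guava jars found in lib_dir."""
    versions = []
    for jar in Path(lib_dir).glob("guava-*.jar"):
        match = GUAVA_JAR.match(jar.name)
        if match:
            versions.append(match.group(1))
    return sorted(versions)


if __name__ == "__main__":
    # Both variables are assumptions; adjust them to the local installation.
    flume_home = os.environ.get("FLUME_HOME")
    hadoop_home = os.environ.get("HADOOP_HOME")
    if flume_home and hadoop_home:
        flume_lib = Path(flume_home) / "lib"
        hadoop_lib = Path(hadoop_home) / "share" / "hadoop" / "common" / "lib"
        print("Flume guava: ", guava_versions(flume_lib))
        print("Hadoop guava:", guava_versions(hadoop_lib))
```

If the two lists differ, the version mismatch described above is the likely cause of the error.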

To verify the result, list the log files in HDFS from the master node.

[root@master ~]# hdfs dfs -ls -R /moreType/20230829/

drwxr-xr-x - root supergroup 0 2023-08-29 09:57 /moreType/20230829/giftRecord

-rw-r--r-- 3 root supergroup 434 2023-08-29 09:57 /moreType/20230829/giftRecord/data.1693274259593.log.tmp

drwxr-xr-x - root supergroup 0 2023-08-29 09:57 /moreType/20230829/userInfo

-rw-r--r-- 3 root supergroup 413 2023-08-29 09:48 /moreType/20230829/userInfo/data.1693273685022.log

-rw-r--r-- 3 root supergroup 413 2023-08-29 09:57 /moreType/20230829/userInfo/data.1693273685023.log

-rw-r--r-- 3 root supergroup 413 2023-08-29 09:57 /moreType/20230829/userInfo/data.1693273685024.log.tmp

drwxr-xr-x - root supergroup 0 2023-08-29 09:48 /moreType/20230829/videoInfo

-rw-r--r-- 3 root supergroup 297 2023-08-29 09:48 /moreType/20230829/videoInfo/data.1693273683677.log

-rw-r--r-- 3 root supergroup 297 2023-08-29 09:48 /moreType/20230829/videoInfo/data.1693273683678.log.tmp

View the contents of a log file.

[root@master ~]# hdfs dfs -cat /moreType/20230829/userInfo/data.1693273685024.log.tmp

2023-08-29 10:18:04,932 INFO sasl.SaslDataTransferClient: SASL encryption trust check: localHostTrusted = false, remoteHostTrusted = false

{"uid":"861848974414839801","nickname":"mick","usign":"","sex":1,"birthday":"","face":"","big_face":"","email":"abc@qq.com","mobile":"","reg_type":"102","last_login_time":"1494344580","reg_time":"1494344580","last_update_time":"1494344580","status":"5","is_verified":"0","verified_info":"","is_seller":"0","level":1,"exp":0,"anchor_level":0,"anchor_exp":0,"os":"android","timestamp":1494344580,"type":"userInfo"}