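Canal is an open-source component from Alibaba for incremental parsing of database logs. It parses the database's binlog to detect changes in table structure and data, so that downstream stores such as a Solr index (or, in this example, a Redis cache) can be updated. With Canal, synchronization is fully decoupled from both business code and APIs, and runs in near real time.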

1. Example: synchronizing MySQL and Redis with Canal. The steps are as follows:

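1). Edit my.ini to enable binlog support

# Enable binlog

log-bin=mysql-bin

# Assign a unique ID to this MySQL server

server-id=1

# Binlog format: STATEMENT logs the SQL statements, ROW logs the changed row data

binlog-format=ROW

# Database to replicate

#binlog-do-db=canaldb

# Database to ignore

binlog-ignore-db=mysql

# Start MySQL without loading the grant tables

#skip-grant-tables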
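The settings above can be sanity-checked before restarting MySQL. The following is a minimal sketch and not part of the original setup: it parses my.ini-style text and reports which of the three settings this tutorial relies on (log-bin, server-id, binlog-format=ROW) are missing or different. The class and method names are hypothetical, and the parser only handles simple `key=value` lines.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

final class BinlogConfigCheck {

    /** Parses my.ini-style text into option name -> value, skipping comments and [section] headers. */
    static Map<String, String> parse(String iniText) {
        Map<String, String> options = new LinkedHashMap<>();
        for (String raw : iniText.split("\\R")) {
            String line = raw.trim();
            if (line.isEmpty() || line.startsWith("#") || line.startsWith(";") || line.startsWith("[")) {
                continue;
            }
            int eq = line.indexOf('=');
            // MySQL accepts '-' and '_' interchangeably in option names, so normalize to '-'.
            String key = (eq < 0 ? line : line.substring(0, eq)).trim().replace('_', '-');
            String value = eq < 0 ? "" : line.substring(eq + 1).trim();
            options.put(key, value);
        }
        return options;
    }

    /** Returns human-readable problems; an empty list means the three settings look as expected. */
    static List<String> problems(Map<String, String> options) {
        List<String> problems = new ArrayList<>();
        if (!options.containsKey("log-bin")) {
            problems.add("log-bin is not set");
        }
        if (options.getOrDefault("server-id", "").isEmpty()) {
            problems.add("server-id is not set");
        }
        if (!"ROW".equalsIgnoreCase(options.get("binlog-format"))) {
            problems.add("binlog-format should be ROW");
        }
        return problems;
    }

    public static void main(String[] args) {
        String ini = String.join("\n",
                "[mysqld]",
                "log-bin=mysql-bin",
                "server-id=1",
                "binlog-format=ROW",
                "#binlog-do-db=canaldb");
        System.out.println(problems(parse(ini)));
    }
}
```

A check like this only inspects the file. The values the running server actually uses can differ, so it complements rather than replaces looking at the server's own variables.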

2). Create a canal account in MySQL.

create user 'canal'@'%' IDENTIFIED WITH mysql_native_password BY 'canal';

grant SELECT, REPLICATION SLAVE, REPLICATION CLIENT on *.* to 'canal'@'%';

FLUSH PRIVILEGES;

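# On MySQL 8 and later the default authentication plugin is caching_sha2_password. Check the account's plugin and, if needed, switch it to mysql_native_password with the following statements.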

select host,user,plugin from mysql.user ;

ALTER USER 'canal'@'%' IDENTIFIED WITH mysql_native_password BY 'canal';

FLUSH PRIVILEGES;

3). Download the canal server.

Download the canal server from the canal releases page:

https://github.com/alibaba/canal/releases

4). Edit the canal server configuration files.

Edit canal.deployer-1.1.6/conf/example/instance.properties

#################################################

## mysql serverId , v1.0.26+ will autoGen

#canal.instance.mysql.slaveId=0

# enable gtid use true/false

canal.instance.gtidon=false

# position info

canal.instance.master.address=127.0.0.1:3306

canal.instance.master.journal.name=

canal.instance.master.position=

canal.instance.master.timestamp=

canal.instance.master.gtid=

# rds oss binlog

canal.instance.rds.accesskey=

canal.instance.rds.secretkey=

canal.instance.rds.instanceId=

# table meta tsdb info

canal.instance.tsdb.enable=true

#canal.instance.tsdb.url=jdbc:mysql://127.0.0.1:3306/canal_tsdb

#canal.instance.tsdb.dbUsername=root

#canal.instance.tsdb.dbPassword=root

#canal.instance.standby.address =

#canal.instance.standby.journal.name =

#canal.instance.standby.position =

#canal.instance.standby.timestamp =

#canal.instance.standby.gtid=

# username/password

canal.instance.dbUsername=canal

canal.instance.dbPassword=canal

canal.instance.connectionCharset = UTF-8

# enable druid Decrypt database password

canal.instance.enableDruid=false

#canal.instance.pwdPublicKey=MFwwDQYJKoZIhvcNAQEBBQADSwAwSAJBALK4BUxdDltRRE5/zXpVEVPUgunvscYFtEip3pmLlhrWpacX7y7GCMo2/JM6LeHmiiNdH1FWgGCpUfircSwlWKUCAwEAAQ==

# table regex

canal.instance.filter.regex=.*\\..*

# canal.instance.filter.regex=springboot.users

# table black regex

canal.instance.filter.black.regex=mysql\\.slave_.*

# table field filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2)

#canal.instance.filter.field=test1.t_product:id/subject/keywords,test2.t_company:id/name/contact/ch

# table field black filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2)

#canal.instance.filter.black.field=test1.t_product:subject/product_image,test2.t_company:id/name/contact/ch

# mq config

canal.mq.topic=example

# dynamic topic route by schema or table regex

#canal.mq.dynamicTopic=mytest1.user,topic2:mytest2\\..*,.*\\..*

canal.mq.partition=0

# hash partition config

#canal.mq.enableDynamicQueuePartition=false

#canal.mq.partitionsNum=3

#canal.mq.dynamicTopicPartitionNum=test.*:4,mycanal:6

#canal.mq.partitionHash=test.table:id^name,.*\\..*

#################################################

canal.instance.parser.parallel=false
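The `canal.instance.filter.regex` and `canal.instance.filter.black.regex` values above are regular expressions applied to `schema.table` names. The sketch below illustrates that matching with `java.util.regex`, assuming the value is a comma-separated list of patterns and that each pattern must match the full `schema.table` string. The class and method names are hypothetical, and this is not Canal's own filter implementation.

```java
import java.util.regex.Pattern;

final class TableFilterSketch {

    /** True if "schema.table" fully matches any of the comma-separated regexes. */
    static boolean accepts(String filterRegex, String schema, String table) {
        String fullName = schema + "." + table;
        for (String part : filterRegex.split(",")) {
            if (Pattern.matches(part.trim(), fullName)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        // In the .properties file the value is written .*\\..* ; once loaded it is the regex .*\..*
        // The Java string literals below denote those loaded regexes.
        String all = ".*\\..*";
        String usersOnly = "springboot\\.users";

        System.out.println(accepts(all, "springboot", "users"));
        System.out.println(accepts(usersOnly, "springboot", "users"));
        System.out.println(accepts(usersOnly, "springboot", "orders"));

        // An unescaped dot, as in the commented-out example line, still matches
        // because '.' matches any character, including a literal dot.
        System.out.println(accepts("springboot.users", "springboot", "users"));
    }
}
```

Narrowing the filter to `springboot\\.users` keeps the instance from delivering events for tables the listener never handles.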

Edit canal.deployer-1.1.6/conf/canal.properties

#################################################

######### common argument #############

#################################################

# tcp bind ip

canal.ip = 127.0.0.1

# register ip to zookeeper

canal.register.ip =

canal.port = 11111

canal.metrics.pull.port = 11112

# canal instance user/passwd

# canal.user = canal

# canal.passwd = E3619321C1A937C46A0D8BD1DAC39F93B27D4458

# canal admin config

#canal.admin.manager = 127.0.0.1:8089

canal.admin.port = 11110

canal.admin.user = admin

canal.admin.passwd = 4ACFE3202A5FF5CF467898FC58AAB1D615029441

# admin auto register

#canal.admin.register.auto = true

#canal.admin.register.cluster =

#canal.admin.register.name =

canal.zkServers =

# flush data to zk

canal.zookeeper.flush.period = 1000

canal.withoutNetty = false

# tcp, kafka, rocketMQ, rabbitMQ, pulsarMQ

canal.serverMode = tcp

# flush meta cursor/parse position to file

canal.file.data.dir = ${canal.conf.dir}

canal.file.flush.period = 1000

## memory store RingBuffer size, should be Math.pow(2,n)

canal.instance.memory.buffer.size = 16384

## memory store RingBuffer used memory unit size , default 1kb

canal.instance.memory.buffer.memunit = 1024

## memory store gets mode used MEMSIZE or ITEMSIZE

canal.instance.memory.batch.mode = MEMSIZE

canal.instance.memory.rawEntry = true

## detecting config

canal.instance.detecting.enable = false

#canal.instance.detecting.sql = insert into retl.xdual values(1,now()) on duplicate key update x=now()

canal.instance.detecting.sql = select 1

canal.instance.detecting.interval.time = 3

canal.instance.detecting.retry.threshold = 3

canal.instance.detecting.heartbeatHaEnable = false

# support maximum transaction size, more than the size of the transaction will be cut into multiple transactions delivery

canal.instance.transaction.size = 1024

# mysql fallback connected to new master should fallback times

canal.instance.fallbackIntervalInSeconds = 60

# network config

canal.instance.network.receiveBufferSize = 16384

canal.instance.network.sendBufferSize = 16384

canal.instance.network.soTimeout = 30

# binlog filter config

canal.instance.filter.druid.ddl = true

canal.instance.filter.query.dcl = false

canal.instance.filter.query.dml = false

canal.instance.filter.query.ddl = false

canal.instance.filter.table.error = false

canal.instance.filter.rows = false

canal.instance.filter.transaction.entry = false

canal.instance.filter.dml.insert = false

canal.instance.filter.dml.update = false

canal.instance.filter.dml.delete = false

# binlog format/image check

canal.instance.binlog.format = ROW,STATEMENT,MIXED

canal.instance.binlog.image = FULL,MINIMAL,NOBLOB

# binlog ddl isolation

canal.instance.get.ddl.isolation = false

# parallel parser config

canal.instance.parser.parallel = true

## concurrent thread number, default 60% available processors, suggest not to exceed Runtime.getRuntime().availableProcessors()

#canal.instance.parser.parallelThreadSize = 16

## disruptor ringbuffer size, must be power of 2

canal.instance.parser.parallelBufferSize = 256

# table meta tsdb info

canal.instance.tsdb.enable = true

canal.instance.tsdb.dir = ${canal.file.data.dir:../conf}/${canal.instance.destination:}

canal.instance.tsdb.url = jdbc:h2:${canal.instance.tsdb.dir}/h2;CACHE_SIZE=1000;MODE=MYSQL;

canal.instance.tsdb.dbUsername = canal

canal.instance.tsdb.dbPassword = canal

# dump snapshot interval, default 24 hour

canal.instance.tsdb.snapshot.interval = 24

# purge snapshot expire , default 360 hour(15 days)

canal.instance.tsdb.snapshot.expire = 360

#################################################

######### destinations #############

#################################################

canal.destinations = example

# conf root dir

canal.conf.dir = ../conf

# auto scan instance dir add/remove and start/stop instance

canal.auto.scan = true

canal.auto.scan.interval = 5

# set this value to 'true' means that when binlog pos not found, skip to latest.

# WARN: pls keep 'false' in production env, or if you know what you want.

canal.auto.reset.latest.pos.mode = false

canal.instance.tsdb.spring.xml = classpath:spring/tsdb/h2-tsdb.xml

#canal.instance.tsdb.spring.xml = classpath:spring/tsdb/mysql-tsdb.xml

canal.instance.global.mode = spring

canal.instance.global.lazy = false

canal.instance.global.manager.address = ${canal.admin.manager}

#canal.instance.global.spring.xml = classpath:spring/memory-instance.xml

canal.instance.global.spring.xml = classpath:spring/file-instance.xml

#canal.instance.global.spring.xml = classpath:spring/default-instance.xml

##################################################

######### MQ Properties #############

##################################################

# aliyun ak/sk , support rds/mq

canal.aliyun.accessKey =

canal.aliyun.secretKey =

canal.aliyun.uid=

canal.mq.flatMessage = true

canal.mq.canalBatchSize = 50

canal.mq.canalGetTimeout = 100

# Set this value to "cloud", if you want open message trace feature in aliyun.

canal.mq.accessChannel = local

canal.mq.database.hash = true

canal.mq.send.thread.size = 30

canal.mq.build.thread.size = 8

##################################################

######### Kafka #############

##################################################

kafka.bootstrap.servers = 127.0.0.1:9092

kafka.acks = all

kafka.compression.type = none

kafka.batch.size = 16384

kafka.linger.ms = 1

kafka.max.request.size = 1048576

kafka.buffer.memory = 33554432

kafka.max.in.flight.requests.per.connection = 1

kafka.retries = 0

kafka.kerberos.enable = false

kafka.kerberos.krb5.file = "../conf/kerberos/krb5.conf"

kafka.kerberos.jaas.file = "../conf/kerberos/jaas.conf"

##################################################

######### RocketMQ #############

##################################################

rocketmq.producer.group = test

rocketmq.enable.message.trace = false

rocketmq.customized.trace.topic =

rocketmq.namespace =

rocketmq.namesrv.addr = 127.0.0.1:9876

rocketmq.retry.times.when.send.failed = 0

rocketmq.vip.channel.enabled = false

rocketmq.tag =

##################################################

######### RabbitMQ #############

##################################################

rabbitmq.host =

rabbitmq.virtual.host =

rabbitmq.exchange =

rabbitmq.username =

rabbitmq.password =

rabbitmq.deliveryMode =

##################################################

######### Pulsar #############

##################################################

pulsarmq.serverUrl =

pulsarmq.roleToken =

pulsarmq.topicTenantPrefix =
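The `canal.passwd` and `canal.admin.passwd` values in this file are 40-character hex digests rather than plaintext. They have the same shape as the output of MySQL's legacy `PASSWORD()` function without the leading `*`, that is, SHA-1 applied twice. The sketch below computes such a digest with `java.security.MessageDigest`. Treat the scheme as an assumption to verify against the Canal documentation for your version; the class name is hypothetical.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

final class CanalPasswordDigest {

    /** Returns the upper-case hex form of SHA1(SHA1(plaintext)). */
    static String doubleSha1Hex(String plaintext) {
        try {
            MessageDigest sha1 = MessageDigest.getInstance("SHA-1");
            byte[] first = sha1.digest(plaintext.getBytes(StandardCharsets.UTF_8));
            // digest() resets the instance, so it can be reused for the second round.
            byte[] second = sha1.digest(first);
            StringBuilder hex = new StringBuilder(second.length * 2);
            for (byte b : second) {
                hex.append(String.format("%02X", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-1 is not available", e);
        }
    }

    public static void main(String[] args) {
        // If the assumption holds, this should equal the canal.admin.passwd value above.
        System.out.println(doubleSha1Hex("admin"));
    }
}
```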

5). Start the canal server and test it.

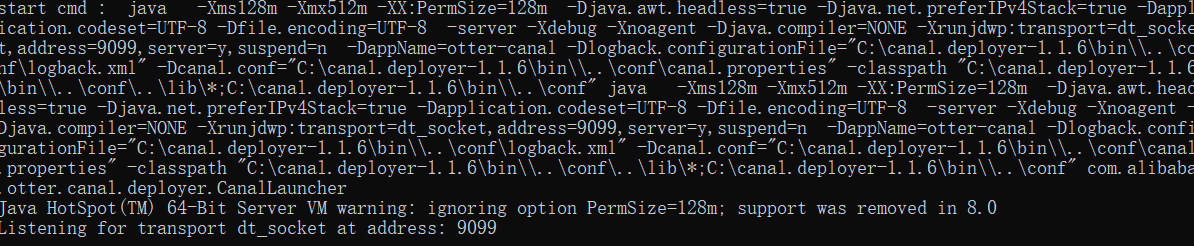

Run canal.deployer-1.1.6/bin/startup.bat on Windows, or canal.deployer-1.1.6/bin/startup.sh on Linux.

Then check canal.deployer-1.1.6/logs/canal/canal.log and canal.deployer-1.1.6/logs/example/example.log for errors. If neither file contains any, the canal server started successfully.
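Reading the two log files by eye works for a one-off check. As a rough alternative, the sketch below scans log files passed on the command line and prints lines that look like error records. It assumes logback-style lines in which the level appears as ` ERROR `, and it also flags any line containing `Exception`; both heuristics and the class name are assumptions, not something Canal provides.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.List;
import java.util.stream.Collectors;

final class CanalLogCheck {

    /** Keeps only lines that look like error records (ERROR level or an exception name). */
    static List<String> errorLines(List<String> lines) {
        return lines.stream()
                .filter(l -> l.contains(" ERROR ") || l.contains("Exception"))
                .collect(Collectors.toList());
    }

    /** Reads a log file and returns its suspicious lines. */
    static List<String> errorLines(Path logFile) {
        try {
            return errorLines(Files.readAllLines(logFile, StandardCharsets.UTF_8));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        if (args.length == 0) {
            System.out.println("usage: java CanalLogCheck.java <canal.log> <example.log>");
            return;
        }
        for (String log : args) {
            Path path = Paths.get(log);
            if (!Files.exists(path)) {
                System.out.println(log + ": not found");
                continue;
            }
            List<String> errors = errorLines(path);
            System.out.println(log + ": " + errors.size() + " suspicious line(s)");
            errors.forEach(System.out::println);
        }
    }
}
```

An empty result is only a hint that startup went well; a quick look at the last lines of each log is still worthwhile.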

6). Add the dependency to pom.xml

<properties>

<java.version>1.8</java.version>

<project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>

<project.reporting.outputEncoding>UTF-8</project.reporting.outputEncoding>

<!-- Newer Spring Boot versions are incompatible with starter-canal; downgrade to 2.3.7.RELEASE -->

<spring-boot.version>2.3.7.RELEASE</spring-boot.version>

</properties>

<dependencies>

...

<!-- Note: this dependency cannot be downloaded from a Maven repository -->

<dependency>

<groupId>com.xpand</groupId>

<artifactId>starter-canal</artifactId>

<version>0.0.1-SNAPSHOT</version>

</dependency>

</dependencies>

Workaround: because starter-canal cannot be resolved through Maven, run the following command to install the jar into your local repository.

mvn install:install-file "-DgroupId=com.xpand" "-DartifactId=starter-canal" "-Dversion=0.0.1-SNAPSHOT" "-Dpackaging=jar" "-Dfile=starter-canal-0.0.1-SNAPSHOT.jar"

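7). Configure application.yml

canal:
  server: 127.0.0.1:11111 # canal server address
  destination: example # default destination (instance) to sync from
  client:
    instances:
      example:
        host: 127.0.0.1
        port: 11111
# Data source configuration
spring:
  # Redis configuration
  redis:
    # Redis database index (default 0)
    database: 0
    # Redis server address
    host: localhost
    # Redis server port
    port: 6379
    # Redis server password (empty by default)
    password: helloworld2021
    # Connection timeout
    timeout: 1000ms
    lettuce:
      pool:
        # Maximum number of connections in the pool
        max-active: 200
        # Maximum time to block waiting for a connection (a negative value means no limit)
        max-wait: -1ms
        # Maximum number of idle connections in the pool
        max-idle: 10
        # Minimum number of idle connections in the pool
        min-idle: 0
  cache:
    type: redis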

8). Add the annotations to the application class

@SpringBootApplication

@MapperScan(basePackages = {"com.simoniu.springboot.mapper"})

@EnableCaching // Enable caching support

@EnableCanalClient // Enable the Canal client

public class FirstdemoApplication {

public static void main(String[] args) {

SpringApplication.run(FirstdemoApplication.class, args);

}

}

9). Configure the canal listener in the listener package

import com.alibaba.otter.canal.protocol.CanalEntry;

import com.hqyj.springboot.util.RedisUtil;

import com.xpand.starter.canal.annotation.*;

import javax.annotation.Resource;

@CanalEventListener

public class CanalDataEventListener {

@Resource

private RedisUtil redisUtil;

/**

* @Description: Listens for inserted rows

* @Author: yangjj_tc

* @Date: 2022/11/11 15:16

*/

@InsertListenPoint

public void onEventInsert(CanalEntry.EventType eventType, CanalEntry.RowData rowData) {

rowData.getAfterColumnsList()

.forEach((c) -> System.out.println("By--Annotation: " + c.getName() + " :: " + c.getValue()));

}

/**

* @Description: Listens for updated rows

* @Author: yangjj_tc

* @Date: 2022/11/11 15:17

*/

@UpdateListenPoint

public void onEventUpdate(CanalEntry.RowData rowData) {

System.out.println("UpdateListenPoint");

rowData.getAfterColumnsList()

.forEach((c) -> System.out.println("By--Annotation: " + c.getName() + " :: " + c.getValue()));

}

/**

* @Description: Listens for deleted rows

* @Author: yangjj_tc

* @Date: 2022/11/11 15:17

*/

@DeleteListenPoint

public void onEventDelete(CanalEntry.EventType eventType) {

System.out.println("DeleteListenPoint");

}

/**

* @Description: Custom listener for updates to a specific table

* @Author: yangjj_tc

* @Date: 2022/11/11 15:18

*/

@ListenPoint(destination = "example", schema = "springboot", table = {"users"},

eventType = CanalEntry.EventType.UPDATE)

public void onEventCustomUpdate(CanalEntry.EventType eventType, CanalEntry.RowData rowData) {

System.out.println("CustomUpdateListenPoint");

rowData.getAfterColumnsList()

.forEach((c) -> System.out.println("By--Annotation: " + c.getName() + " :: " + c.getValue()));

}

@ListenPoint(destination = "example", schema = "springboot", // database (schema) to listen on

table = {"users"}, // table to listen on

eventType = {CanalEntry.EventType.UPDATE, CanalEntry.EventType.INSERT, CanalEntry.EventType.DELETE})

public void onEventCustomUpdateForTbUser(CanalEntry.EventType eventType, CanalEntry.RowData rowData) {

//getChangeValue(eventType, rowData);

// Message: "Detected an insert, update, or delete on the users table"

System.out.println("检测到users表触发了插入,更新或者删除操作.....");

rowData.getAfterColumnsList()

.forEach((c) -> System.out.println("By--Annotation: " + c.getName() + " :: " + c.getValue()));

// Message: "Evicting the Redis cache"

System.out.println("-----------执行删除redis缓存--------");

redisUtil.removeByPrex("usersCacheList");

}

public static void getChangeValue(CanalEntry.EventType eventType, CanalEntry.RowData rowData) {

if (eventType == CanalEntry.EventType.DELETE) {

rowData.getBeforeColumnsList().forEach(column -> {

// Column values before the row was deleted

System.out.println(column.getName() + " == " + column.getValue());

});

} else {

rowData.getBeforeColumnsList().forEach(column -> {

// Print each column's name and value before the change

System.out.println(column.getName() + " == " + column.getValue());

});

rowData.getAfterColumnsList().forEach(column -> {

// Print each column's name and value after the change

System.out.println(column.getName() + " == " + column.getValue());

});

}

}

}
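The listener evicts cached entries by key prefix through `RedisUtil`, which is not shown in this section. The sketch below illustrates the idea of prefix-based eviction with an in-memory map standing in for Redis. Everything in it is hypothetical, including the class name, the method names, and the key layout; a real helper would talk to Redis instead.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

final class PrefixEvictionSketch {

    // In-memory stand-in for the Redis keyspace.
    private final Map<String, Object> store = new ConcurrentHashMap<>();

    void put(String key, Object value) {
        store.put(key, value);
    }

    boolean contains(String key) {
        return store.containsKey(key);
    }

    /** Removes every key that starts with the prefix and returns how many were removed. */
    int removeByPrefix(String prefix) {
        int before = store.size();
        store.keySet().removeIf(key -> key.startsWith(prefix));
        return before - store.size();
    }

    public static void main(String[] args) {
        PrefixEvictionSketch cache = new PrefixEvictionSketch();
        cache.put("usersCacheList::page1", "cached page 1");
        cache.put("usersCacheList::page2", "cached page 2");
        cache.put("ordersCacheList::page1", "unrelated entry");

        // What the listener would do after a change to the users table.
        int removed = cache.removeByPrefix("usersCacheList");
        System.out.println("removed " + removed + " entries");
        System.out.println("orders entry kept: " + cache.contains("ordersCacheList::page1"));
    }
}
```

Evicting by prefix is coarse: any change to the users table drops every cached users list, which keeps the cache consistent at the cost of extra reloads. Against a real Redis server with many keys, an incremental SCAN is generally preferable to KEYS for finding the matching entries.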

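10). Start the project and verify that data is synchronized

Try modifying a record in the users table. The listener prints output like the following: the first line reports that an insert, update, or delete was detected on the users table, and the last line reports that the Redis cache is being evicted.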

检测到users表触发了插入,更新或者删除操作.....

By--Annotation: uid :: 32

By--Annotation: username :: 王老二

By--Annotation: password :: 123456

By--Annotation: gender :: 男

By--Annotation: birthday :: 2023-05-01

By--Annotation: address ::

By--Annotation: create_time :: 2023-05-11 14:21:31

By--Annotation: flag :: 0

By--Annotation: modify_time :: 2023-05-11 14:21:31

By--Annotation: version :: 1

By--Annotation: mobile ::

-----------执行删除redis缓存--------