HBase Basics Tutorial (16)

HBase JMX monitoring.

1. HBase JMX monitoring example

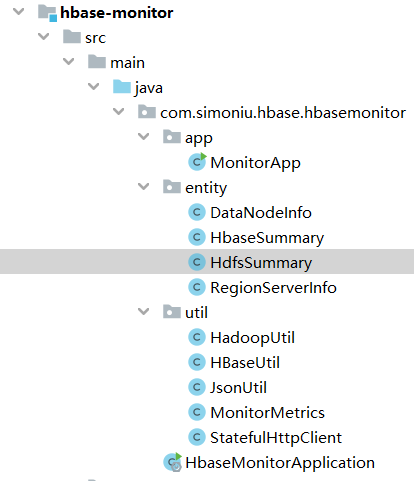

Project structure:

HBase JMX entity classes.

//HbaseSummary.java

package com.simoniu.hbase.hbasemonitor.entity;

import java.util.Date;

import java.util.List;

/**

* Created by jixin on 18-3-24.

*/

public class HbaseSummary {

//"name": "Hadoop:service=HBase,name=Master,sub=Server"

private String hmasterNode;

private String status;

// List of live RegionServers

private List<RegionServerInfo> liveRegionServers;

// List of dead RegionServers

private List<RegionServerInfo> deadRegionServers;

// Number of live RegionServers

private int numRegionServers;

// Number of dead RegionServers

private int numDeadRegionServers;

private Date createTime;

public void printInfo() {

System.out.println("HBASE SUMMARY INFO");

System.out.println(String

.format("numRegionServers:%d\nnumDeadRegionServers%d\n", numRegionServers,

numDeadRegionServers));

liveRegionServers.forEach(regionServerInfo -> {

System.out.println(String

.format("hostName:%s\nregionCount:%s", regionServerInfo.getHostName(),

regionServerInfo.getRegionCount()));

});

System.out.println("----------------------");

}

public String getHmasterNode() {

return hmasterNode;

}

public void setHmasterNode(String hmasterNode) {

this.hmasterNode = hmasterNode;

}

public String getStatus() {

return status;

}

public void setStatus(String status) {

this.status = status;

}

public List<RegionServerInfo> getLiveRegionServers() {

return liveRegionServers;

}

public void setLiveRegionServers(

List<RegionServerInfo> liveRegionServers) {

this.liveRegionServers = liveRegionServers;

}

public List<RegionServerInfo> getDeadRegionServers() {

return deadRegionServers;

}

public void setDeadRegionServers(

List<RegionServerInfo> deadRegionServers) {

this.deadRegionServers = deadRegionServers;

}

public int getNumRegionServers() {

return numRegionServers;

}

public void setNumRegionServers(int numRegionServers) {

this.numRegionServers = numRegionServers;

}

public int getNumDeadRegionServers() {

return numDeadRegionServers;

}

public void setNumDeadRegionServers(int numDeadRegionServers) {

this.numDeadRegionServers = numDeadRegionServers;

}

public Date getCreateTime() {

return createTime;

}

public void setCreateTime(Date createTime) {

this.createTime = createTime;

}

}

//RegionServerInfo.java

package com.simoniu.hbase.hbasemonitor.entity;

/**

* Created by jixin on 18-3-24.

*/

public class RegionServerInfo {

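// Hostname of the RegionServer

private String hostName;

//"name" : "Hadoop:service=HBase,name=RegionServer,sub=Server"

// Number of stores

private int storeCount;

// Number of regions managed by this RegionServer

private int regionCount;

// Number of store files

private int storeFileCount;

// MemStore size (HBaseUtil stores it in MB)

private double memStoreSize;

// Store file size (HBaseUtil stores it in GB)

private double storeFileSize;

// Size of the table indexes managed by this RegionServer

private double staticIndexSize;

// Total number of requests

private int totalRequestCount;

// Number of read requests

private int readRequestCount;

// Number of write requests

private int writeRequestCount;

// Number of cells processed by compactions

private int compactedCellsCount;

// Number of cells processed by major compactions

private int majorCompactedCellsCount;

// Size of the data flushed to disk (HBaseUtil stores it in GB)

private double flushedCellsSize;

// Number of requests blocked because the memstore exceeded its threshold

private int blockedRequestCount;

// Number of region split requests

private int splitRequestCount;

// Number of successful region splits

private int splitSuccessCount;

// Number of Get requests that took longer than 1000 ms to complete

private int slowGetCount;

//"name" : "Hadoop:service=HBase,name=RegionServer,sub=IPC"

// Number of connections currently open on this RegionServer

private int numOpenConnections;

// Number of active RPC handlers

private int numActiveHandler;

// Amount of data sent, in GB

private double sentBytes;

// Amount of data received, in GB

private double receivedBytes;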

public String getHostName() {

return hostName;

}

public void setHostName(String hostName) {

this.hostName = hostName;

}

public int getStoreCount() {

return storeCount;

}

public void setStoreCount(int storeCount) {

this.storeCount = storeCount;

}

public int getRegionCount() {

return regionCount;

}

public void setRegionCount(int regionCount) {

this.regionCount = regionCount;

}

public int getStoreFileCount() {

return storeFileCount;

}

public void setStoreFileCount(int storeFileCount) {

this.storeFileCount = storeFileCount;

}

public double getMemStoreSize() {

return memStoreSize;

}

public void setMemStoreSize(double memStoreSize) {

this.memStoreSize = memStoreSize;

}

public double getStoreFileSize() {

return storeFileSize;

}

public void setStoreFileSize(double storeFileSize) {

this.storeFileSize = storeFileSize;

}

public double getStaticIndexSize() {

return staticIndexSize;

}

public void setStaticIndexSize(double staticIndexSize) {

this.staticIndexSize = staticIndexSize;

}

public int getTotalRequestCount() {

return totalRequestCount;

}

public void setTotalRequestCount(int totalRequestCount) {

this.totalRequestCount = totalRequestCount;

}

public int getReadRequestCount() {

return readRequestCount;

}

public void setReadRequestCount(int readRequestCount) {

this.readRequestCount = readRequestCount;

}

public int getWriteRequestCount() {

return writeRequestCount;

}

public void setWriteRequestCount(int writeRequestCount) {

this.writeRequestCount = writeRequestCount;

}

public int getCompactedCellsCount() {

return compactedCellsCount;

}

public void setCompactedCellsCount(int compactedCellsCount) {

this.compactedCellsCount = compactedCellsCount;

}

public int getMajorCompactedCellsCount() {

return majorCompactedCellsCount;

}

public void setMajorCompactedCellsCount(int majorCompactedCellsCount) {

this.majorCompactedCellsCount = majorCompactedCellsCount;

}

public double getFlushedCellsSize() {

return flushedCellsSize;

}

public void setFlushedCellsSize(double flushedCellsSize) {

this.flushedCellsSize = flushedCellsSize;

}

public int getBlockedRequestCount() {

return blockedRequestCount;

}

public void setBlockedRequestCount(int blockedRequestCount) {

this.blockedRequestCount = blockedRequestCount;

}

public int getSplitRequestCount() {

return splitRequestCount;

}

public void setSplitRequestCount(int splitRequestCount) {

this.splitRequestCount = splitRequestCount;

}

public int getSplitSuccessCount() {

return splitSuccessCount;

}

public void setSplitSuccessCount(int splitSuccessCount) {

this.splitSuccessCount = splitSuccessCount;

}

public int getSlowGetCount() {

return slowGetCount;

}

public void setSlowGetCount(int slowGetCount) {

this.slowGetCount = slowGetCount;

}

public int getNumOpenConnections() {

return numOpenConnections;

}

public void setNumOpenConnections(int numOpenConnections) {

this.numOpenConnections = numOpenConnections;

}

public int getNumActiveHandler() {

return numActiveHandler;

}

public void setNumActiveHandler(int numActiveHandler) {

this.numActiveHandler = numActiveHandler;

}

public double getSentBytes() {

return sentBytes;

}

public void setSentBytes(double sentBytes) {

this.sentBytes = sentBytes;

}

public double getReceivedBytes() {

return receivedBytes;

}

public void setReceivedBytes(double receivedBytes) {

this.receivedBytes = receivedBytes;

}

}

The related utility classes are exactly the same as in the previous section.
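Since those utility classes are not reproduced here, the following is a minimal sketch of the shape that `HBaseUtil` appears to expect from `MonitorMetrics`. It assumes the `/jmx` endpoint returns a JSON object with a `beans` array, modelled here as plain maps. `MonitorMetricsSketch`, `addBean`, `getInt`, and `getLong` are hypothetical names for illustration and are not the class from the previous section; only `getMetricsValue` mirrors a method used in this section.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical stand-in for MonitorMetrics; not the class from the previous section.
class MonitorMetricsSketch {

    // Assumption: the /jmx response looks like {"beans": [ {...}, ... ]},
    // and each bean has already been parsed into a map of attribute name to value.
    private final List<Map<String, Object>> beans = new ArrayList<>();

    void addBean(Map<String, Object> bean) {
        beans.add(bean);
    }

    // Returns the first value stored under the attribute name, or null if no bean has it.
    Object getMetricsValue(String name) {
        for (Map<String, Object> bean : beans) {
            if (bean.containsKey(name)) {
                return bean.get(name);
            }
        }
        return null;
    }

    // Converts through Number, so a value parsed as Integer or Long is accepted.
    // A direct (int) cast on an Object throws ClassCastException when the value is a Long.
    int getInt(String name, int fallback) {
        Object value = getMetricsValue(name);
        return (value instanceof Number) ? ((Number) value).intValue() : fallback;
    }

    long getLong(String name, long fallback) {
        Object value = getMetricsValue(name);
        return (value instanceof Number) ? ((Number) value).longValue() : fallback;
    }

    public static void main(String[] args) {
        // Made-up sample values, for illustration only.
        Map<String, Object> bean = new HashMap<>();
        bean.put("name", "Hadoop:service=HBase,name=Master,sub=Server");
        bean.put("numRegionServers", 1);
        bean.put("numDeadRegionServers", 0L);

        MonitorMetricsSketch metrics = new MonitorMetricsSketch();
        metrics.addBean(bean);

        System.out.println(metrics.getInt("numRegionServers", -1));     // prints 1
        System.out.println(metrics.getInt("numDeadRegionServers", -1)); // prints 0
        System.out.println(metrics.getInt("notPresent", -1));           // prints -1
    }
}
```

Reading counters through `Number` rather than a direct `(int)` cast may be worth considering for values such as `totalRequestCount`, which can grow beyond the `int` range on a busy cluster.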

HBase JMX utility class.

package com.simoniu.hbase.hbasemonitor.util;

import java.io.IOException;

import java.text.DecimalFormat;

import java.util.ArrayList;

import java.util.Date;

import java.util.List;

import com.simoniu.hbase.hbasemonitor.entity.HbaseSummary;

import com.simoniu.hbase.hbasemonitor.entity.RegionServerInfo;

/**

* Created by jixin on 18-3-24.

*/

public class HBaseUtil {

public static long mbLength = 1048576L;

public static long gbLength = 1073741824L;

public static final String jmxServerUrlFormat = "%s/jmx?qry=%s";

public static final String hbaseRegionJmxUrlFormat = "http://%s:%s/jmx?qry=%s";

public static final String hbaseJmxServerUrl = "http://hadoop-master:16010";

// Note: the RegionServer admin (web UI) port has been changed to 16030.

public static final String hbaseRegionServerJmxPort = "16030";

public static final String masterServer = "Hadoop:service=HBase,name=Master,sub=Server";

public static final String regionServer = "Hadoop:service=HBase,name=RegionServer,sub=Server";

public static final String regionIpc = "Hadoop:service=HBase,name=RegionServer,sub=IPC";

public static HbaseSummary getHbaseSummary(StatefulHttpClient client)throws IOException{

HbaseSummary hbaseSummary = new HbaseSummary();

String hmasterUrl= String.format(jmxServerUrlFormat,hbaseJmxServerUrl,masterServer);

System.out.println("------------getHbaseSummary=>hmasterUrl--------------");

System.out.println(hmasterUrl);

MonitorMetrics monitorMetrics = client.get(MonitorMetrics.class,hmasterUrl,null,null);

hbaseSummary

.setNumDeadRegionServers((int) monitorMetrics.getMetricsValue("numDeadRegionServers"));

hbaseSummary.setNumRegionServers((int) monitorMetrics.getMetricsValue("numRegionServers"));

String liveRegionServers = monitorMetrics.getMetricsValue("tag.liveRegionServers")

.toString();

hbaseSummary.setLiveRegionServers(regionServerInfoReader(client, liveRegionServers, true));

String deadRegionServers = monitorMetrics.getMetricsValue("tag.deadRegionServers")

.toString();

hbaseSummary.setDeadRegionServers(regionServerInfoReader(client, deadRegionServers, false));

hbaseSummary.setHmasterNode("localhost");

return hbaseSummary;

}

public static List<RegionServerInfo> regionServerInfoReader(StatefulHttpClient client,

String regionServerStr, boolean getInfo) {

List<RegionServerInfo> regionServerInfos = new ArrayList<>();

Date nowDate = new Date();

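for (String info : regionServerStr.split(";")) {

// Skip blank entries: an empty tag value (for example, no dead RegionServers) still splits into one empty string.

if (info.trim().isEmpty()) {

continue;

}

String hostName = info.split(",")[0];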

RegionServerInfo regionServerInfo = new RegionServerInfo();

if (getInfo) {

try {

String regionServerUrl = String

.format(hbaseRegionJmxUrlFormat, hostName,

hbaseRegionServerJmxPort, regionServer);

MonitorMetrics hadoopMetrics = client

.get(MonitorMetrics.class, regionServerUrl, null, null);

regionServerInfo.setHostName(hostName);

regionServerInfo.setStoreCount((int) hadoopMetrics.getMetricsValue("storeCount"));

regionServerInfo.setRegionCount((int) hadoopMetrics.getMetricsValue("regionCount"));

regionServerInfo.setStoreFileCount((int) hadoopMetrics.getMetricsValue("storeFileCount"));

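// Convert bytes to MB with doubleFormat; the previous (int) cast followed by integer division dropped the fractional part.

regionServerInfo.setMemStoreSize(

doubleFormat(hadoopMetrics.getMetricsValue("memStoreSize"), mbLength));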

regionServerInfo.setStoreFileSize(

doubleFormat(hadoopMetrics.getMetricsValue("storeFileSize"),

gbLength));

regionServerInfo

.setStaticIndexSize((int) hadoopMetrics.getMetricsValue("staticIndexSize"));

regionServerInfo

.setTotalRequestCount((int) hadoopMetrics.getMetricsValue("totalRequestCount"));

regionServerInfo

.setReadRequestCount((int) hadoopMetrics.getMetricsValue("readRequestCount"));

regionServerInfo

.setWriteRequestCount((int) hadoopMetrics.getMetricsValue("writeRequestCount"));

regionServerInfo

.setCompactedCellsCount((int) hadoopMetrics.getMetricsValue("compactedCellsCount"));

regionServerInfo.setMajorCompactedCellsCount(

(int) hadoopMetrics.getMetricsValue("majorCompactedCellsCount"));

regionServerInfo.setFlushedCellsSize(

doubleFormat(hadoopMetrics.getMetricsValue("flushedCellsSize"),

gbLength));

regionServerInfo

.setBlockedRequestCount((int) hadoopMetrics.getMetricsValue("blockedRequestCount"));

regionServerInfo

.setSplitRequestCount((int) hadoopMetrics.getMetricsValue("splitRequestCount"));

regionServerInfo

.setSplitSuccessCount((int) hadoopMetrics.getMetricsValue("splitSuccessCount"));

regionServerInfo.setSlowGetCount((int) hadoopMetrics.getMetricsValue("slowGetCount"));

} catch (IOException e) {

e.printStackTrace();

}

try {

String regionIpcUrl = String

.format(hbaseRegionJmxUrlFormat, hostName,

hbaseRegionServerJmxPort, regionIpc);

MonitorMetrics hadoopMetrics = client

.get(MonitorMetrics.class, regionIpcUrl, null, null);

regionServerInfo

.setNumOpenConnections((int) hadoopMetrics.getMetricsValue("numOpenConnections"));

regionServerInfo

.setNumActiveHandler((int) hadoopMetrics.getMetricsValue("numActiveHandler"));

regionServerInfo.setSentBytes(

doubleFormat(hadoopMetrics.getMetricsValue("sentBytes"), gbLength));

regionServerInfo.setReceivedBytes(

doubleFormat(hadoopMetrics.getMetricsValue("receivedBytes"),

gbLength));

} catch (IOException e) {

e.printStackTrace();

}

} else {

regionServerInfo.setHostName(hostName);

}

regionServerInfos.add(regionServerInfo);

}

return regionServerInfos;

}

public static DecimalFormat df = new DecimalFormat("#.##");

public static double doubleFormat(Object num, long unit) {

double result = Double.parseDouble(String.valueOf(num)) / unit;

return Double.parseDouble(df.format(result));

}

public static double doubleFormat(Object num) {

double result = Double.parseDouble(String.valueOf(num));

return Double.parseDouble(df.format(result));

}

}
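The `doubleFormat` helpers above divide a raw byte count by a unit and round to two decimals by formatting and re-parsing the number. The conversion can be checked in isolation with a sketch like the one below. `UnitSketch` and `toUnit` are hypothetical names, and rounding with `BigDecimal` is a swapped-in technique rather than what `HBaseUtil` does: `new DecimalFormat("#.##")` formats with the default locale's decimal separator, so the `Double.parseDouble` round trip may fail under locales that use a comma.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

// Hypothetical helper for illustration; not part of the tutorial project.
class UnitSketch {

    static final long MB = 1024L * 1024L; // 1048576, same value as mbLength
    static final long GB = 1024L * MB;    // 1073741824, same value as gbLength

    // Divides a raw byte count by the unit and rounds to two decimal places.
    // HALF_EVEN is the default rounding mode of DecimalFormat.
    static double toUnit(Object rawBytes, long unit) {
        double bytes = ((Number) rawBytes).doubleValue();
        return BigDecimal.valueOf(bytes / unit)
                .setScale(2, RoundingMode.HALF_EVEN)
                .doubleValue();
    }

    public static void main(String[] args) {
        // Made-up sample values, for illustration only.
        System.out.println(toUnit(1610612736L, GB)); // prints 1.5
        System.out.println(toUnit(1048576, MB));     // prints 1.0
    }
}
```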

Test class.

package com.simoniu.hbase.hbasemonitor.app;

import com.simoniu.hbase.hbasemonitor.util.HBaseUtil;

import com.simoniu.hbase.hbasemonitor.util.HadoopUtil;

import com.simoniu.hbase.hbasemonitor.util.StatefulHttpClient;

import java.io.IOException;

/**

* Created by jixin on 18-3-24.

*/

public class MonitorApp {

public static void main(String[] args) throws IOException {

StatefulHttpClient client = new StatefulHttpClient(null);

HBaseUtil.getHbaseSummary(client).printInfo();

}

}

Output:

HBASE SUMMARY INFO

numRegionServers:1

numDeadRegionServers0

hostName:hadoop-master

regionCount:6

----------------------
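The `hostName` printed above is taken from the Master's `tag.liveRegionServers` value, which `HBaseUtil` splits on `;` and then on `,` before building one JMX URL per RegionServer. The sketch below reproduces those two steps on their own. It assumes each entry has the form `host,port,startcode`; the sample string is made up, and `RegionServerListSketch`, `hostNames`, and `jmxUrl` are hypothetical names.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper for illustration; not part of the tutorial project.
class RegionServerListSketch {

    // Same pattern as hbaseRegionJmxUrlFormat in HBaseUtil.
    static final String URL_FORMAT = "http://%s:%s/jmx?qry=%s";

    // Extracts the host name (first comma-separated field) from each ';'-separated entry.
    // Blank entries are skipped, because "".split(";") yields one empty string.
    static List<String> hostNames(String tagValue) {
        List<String> hosts = new ArrayList<>();
        if (tagValue == null) {
            return hosts;
        }
        for (String entry : tagValue.split(";")) {
            String trimmed = entry.trim();
            if (trimmed.isEmpty()) {
                continue;
            }
            hosts.add(trimmed.split(",")[0]);
        }
        return hosts;
    }

    // Builds the JMX query URL for one RegionServer and one bean name.
    static String jmxUrl(String host, String port, String beanName) {
        return String.format(URL_FORMAT, host, port, beanName);
    }

    public static void main(String[] args) {
        // Made-up sample entry; the real value comes from the Master JMX bean.
        String sample = "hadoop-master,16020,1521858000000";
        for (String host : hostNames(sample)) {
            System.out.println(jmxUrl(host, "16030",
                    "Hadoop:service=HBase,name=RegionServer,sub=Server"));
        }
        System.out.println(hostNames("").size()); // prints 0
    }
}
```

With the sample entry, the first line printed is `http://hadoop-master:16030/jmx?qry=Hadoop:service=HBase,name=RegionServer,sub=Server`, which can be opened in a browser to inspect the raw metrics.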