HBase Basics Tutorial (15)

Hadoop JMX Monitoring


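1. What is JMX?

JMX (Java Management Extensions) is the Java platform's API for management and monitoring. It provides a pluggable framework through which Java applications, devices, and systems can be managed and monitored via a single, uniform interface.

JMX has the following main roles:

- MBean Server: the registry that hosts MBeans and exposes them for management and monitoring.

- MBean: a managed bean that wraps the management and monitoring data of a Java application.

- MBean Client: a client that communicates with the MBean Server.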
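To make the three roles concrete, here is a minimal sketch that uses only the JDK's `javax.management` API. The `Counter` bean and the object name `com.example.demo:type=Counter` are made up for illustration and have nothing to do with Hadoop. The code plays the client role in-process by calling the platform MBean Server directly; a real monitoring client would usually connect remotely.

```java
import java.lang.management.ManagementFactory;
import javax.management.MBeanServer;
import javax.management.ObjectName;

class JmxRolesDemo {

  // MBean: a Standard MBean interface must be named <ClassName>MBean.
  public interface CounterMBean {
    int getCount();   // exposed as the read-only attribute "Count"
    void increment(); // exposed as the operation "increment"
  }

  public static class Counter implements CounterMBean {
    private int count;

    @Override
    public synchronized int getCount() {
      return count;
    }

    @Override
    public synchronized void increment() {
      count++;
    }
  }

  // Registers the bean, invokes it through the server, and reads it back.
  static int registerAndRead() {
    try {
      // MBean Server: the JVM-wide platform server.
      MBeanServer server = ManagementFactory.getPlatformMBeanServer();
      // Hypothetical object name, chosen only for this demo.
      ObjectName name = new ObjectName("com.example.demo:type=Counter");
      server.registerMBean(new Counter(), name);
      try {
        // Client role: every call goes through the server, addressed by ObjectName.
        server.invoke(name, "increment", new Object[0], new String[0]);
        return (Integer) server.getAttribute(name, "Count");
      } finally {
        server.unregisterMBean(name);
      }
    } catch (Exception e) {
      throw new IllegalStateException("JMX demo failed", e);
    }
  }

  public static void main(String[] args) {
    System.out.println("Count = " + registerAndRead());
  }
}
```

Hadoop daemons register their own MBeans in the same way, and the NameNode additionally publishes them as JSON over HTTP, which is what the rest of this tutorial reads.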

2. Hadoop JMX monitoring example

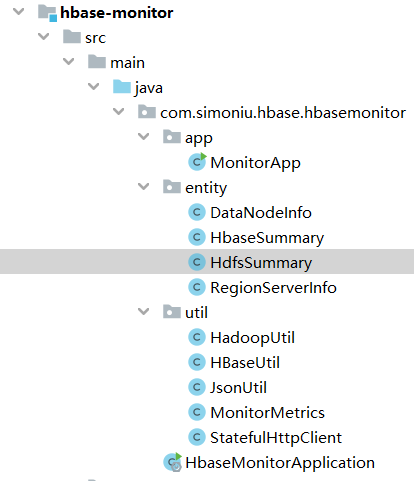

Project structure:

Hadoop JMX monitoring example:

Entity classes for the Hadoop JMX data.

//DataNodeInfo.java

package com.simoniu.hbase.hbasemonitor.entity;

/**

* Created by jixin on 18-3-24.

*/

public class DataNodeInfo {

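// hostname of the datanode

private String nodeName;

// IP address of the datanode

private String nodeAddr;

// seconds since the datanode last contacted the namenode

private int lastContact;

// space used by HDFS on the datanode, GB

private double usedSpace;

// admin state of the datanode

private String adminState;

// space used by non-HDFS data on the datanode, GB

private double nonDfsUsedSpace;

// total capacity of the datanode, GB

private double capacity;

// number of blocks on the datanode

private int numBlocks;

// remaining space on the datanode, GB

private double remaining;

// space used by the block pool on the datanode, GB

private double blockPoolUsed;

// block pool usage as a percentage

private double blockPoolUsedPerent;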

public String getNodeName() {

return nodeName;

}

public void setNodeName(String nodeName) {

this.nodeName = nodeName;

}

public String getNodeAddr() {

return nodeAddr;

}

public void setNodeAddr(String nodeAddr) {

this.nodeAddr = nodeAddr;

}

public int getLastContact() {

return lastContact;

}

public void setLastContact(int lastContact) {

this.lastContact = lastContact;

}

public double getUsedSpace() {

return usedSpace;

}

public void setUsedSpace(double usedSpace) {

this.usedSpace = usedSpace;

}

public String getAdminState() {

return adminState;

}

public void setAdminState(String adminState) {

this.adminState = adminState;

}

public double getNonDfsUsedSpace() {

return nonDfsUsedSpace;

}

public void setNonDfsUsedSpace(double nonDfsUsedSpace) {

this.nonDfsUsedSpace = nonDfsUsedSpace;

}

public double getCapacity() {

return capacity;

}

public void setCapacity(double capacity) {

this.capacity = capacity;

}

public int getNumBlocks() {

return numBlocks;

}

public void setNumBlocks(int numBlocks) {

this.numBlocks = numBlocks;

}

public double getRemaining() {

return remaining;

}

public void setRemaining(double remaining) {

this.remaining = remaining;

}

public double getBlockPoolUsed() {

return blockPoolUsed;

}

public void setBlockPoolUsed(double blockPoolUsed) {

this.blockPoolUsed = blockPoolUsed;

}

public double getBlockPoolUsedPerent() {

return blockPoolUsedPerent;

}

public void setBlockPoolUsedPerent(double blockPoolUsedPerent) {

this.blockPoolUsedPerent = blockPoolUsedPerent;

}

}

//HdfsSummary.java

package com.simoniu.hbase.hbasemonitor.entity;

import java.util.List;

/**

* Created by jixin on 18-3-24.

*/

public class HdfsSummary {

//"name": "Hadoop:service=NameNode,name=NameNodeInfo"

// total capacity, GB

private double total;

// space used by HDFS, GB

private double dfsUsed;

// percentage of space used by HDFS

private double percentUsed;

// free HDFS space, GB

private double dfsFree;

// whether HDFS is in safe mode

private String safeMode;

// space used by non-HDFS data, GB

private double nonDfsUsed;

// HDFS capacity used by this namespace in the cluster, GB

private double blockPoolUsedSpace;

// HDFS capacity used by this namespace, as a percentage

private double percentBlockPoolUsed;

// percentage of space remaining

private double percentRemaining;

// total number of blocks in the cluster

private int totalBlocks;

// total number of files in the cluster

private int totalFiles;

// number of missing blocks in the cluster

private int missingBlocks;

// details of the live datanodes

private List<DataNodeInfo> liveDataNodeInfos;

// details of the dead datanodes

private List<DataNodeInfo> deadDataNodeInfos;

//"name": "Hadoop:service=NameNode,name=FSNamesystemState"

// number of live datanodes

private int numLiveDataNodes;

// number of dead datanodes

private int numDeadDataNodes;

// number of failed volumes (bad disks)

private int volumeFailuresTotal;

public void printInfo() {

System.out.println("HDFS SUMMARY INFO");

System.out.println(String

.format("totalBlocks:%s\ntotalFiles:%s\nnumLiveDataNodes:%s", totalBlocks, totalFiles,

numLiveDataNodes));

liveDataNodeInfos.forEach(node -> {

System.out.println(

String.format("nodeName:%s\nnumBlocks:%s", node.getNodeName(), node.getNumBlocks()));

});

System.out.println("----------------------");

}

public double getTotal() {

return total;

}

public void setTotal(double total) {

this.total = total;

}

public double getDfsUsed() {

return dfsUsed;

}

public void setDfsUsed(double dfsUsed) {

this.dfsUsed = dfsUsed;

}

public double getPercentUsed() {

return percentUsed;

}

public void setPercentUsed(double percentUsed) {

this.percentUsed = percentUsed;

}

public double getDfsFree() {

return dfsFree;

}

public void setDfsFree(double dfsFree) {

this.dfsFree = dfsFree;

}

public String getSafeMode() {

return safeMode;

}

public void setSafeMode(String safeMode) {

this.safeMode = safeMode;

}

public double getNonDfsUsed() {

return nonDfsUsed;

}

public void setNonDfsUsed(double nonDfsUsed) {

this.nonDfsUsed = nonDfsUsed;

}

public double getBlockPoolUsedSpace() {

return blockPoolUsedSpace;

}

public void setBlockPoolUsedSpace(double blockPoolUsedSpace) {

this.blockPoolUsedSpace = blockPoolUsedSpace;

}

public double getPercentBlockPoolUsed() {

return percentBlockPoolUsed;

}

public void setPercentBlockPoolUsed(double percentBlockPoolUsed) {

this.percentBlockPoolUsed = percentBlockPoolUsed;

}

public double getPercentRemaining() {

return percentRemaining;

}

public void setPercentRemaining(double percentRemaining) {

this.percentRemaining = percentRemaining;

}

public int getTotalBlocks() {

return totalBlocks;

}

public void setTotalBlocks(int totalBlocks) {

this.totalBlocks = totalBlocks;

}

public int getTotalFiles() {

return totalFiles;

}

public void setTotalFiles(int totalFiles) {

this.totalFiles = totalFiles;

}

public int getMissingBlocks() {

return missingBlocks;

}

public void setMissingBlocks(int missingBlocks) {

this.missingBlocks = missingBlocks;

}

public List<DataNodeInfo> getLiveDataNodeInfos() {

return liveDataNodeInfos;

}

public void setLiveDataNodeInfos(

List<DataNodeInfo> liveDataNodeInfos) {

this.liveDataNodeInfos = liveDataNodeInfos;

}

public List<DataNodeInfo> getDeadDataNodeInfos() {

return deadDataNodeInfos;

}

public void setDeadDataNodeInfos(

List<DataNodeInfo> deadDataNodeInfos) {

this.deadDataNodeInfos = deadDataNodeInfos;

}

public int getNumLiveDataNodes() {

return numLiveDataNodes;

}

public void setNumLiveDataNodes(int numLiveDataNodes) {

this.numLiveDataNodes = numLiveDataNodes;

}

public int getNumDeadDataNodes() {

return numDeadDataNodes;

}

public void setNumDeadDataNodes(int numDeadDataNodes) {

this.numDeadDataNodes = numDeadDataNodes;

}

public int getVolumeFailuresTotal() {

return volumeFailuresTotal;

}

public void setVolumeFailuresTotal(int volumeFailuresTotal) {

this.volumeFailuresTotal = volumeFailuresTotal;

}

}

Related utility classes.

//JsonUtil.java

package com.simoniu.hbase.hbasemonitor.util;

import java.io.IOException;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import com.fasterxml.jackson.databind.DeserializationFeature;

import com.fasterxml.jackson.databind.ObjectMapper;

public class JsonUtil {

private static ObjectMapper objMapper = new ObjectMapper();

static {

objMapper.configure(DeserializationFeature.FAIL_ON_UNKNOWN_PROPERTIES,

false);

objMapper.configure(DeserializationFeature.FAIL_ON_IGNORED_PROPERTIES,

false);

}

public static String toJson(Object obj) throws IOException {

return objMapper.writeValueAsString(obj);

}

public static String toJson(Object obj, boolean prettyFormat) throws IOException {

String json = objMapper.writeValueAsString(obj);

if (prettyFormat) {

Object jsonObj = objMapper.readValue(json, Object.class);

json = objMapper.writerWithDefaultPrettyPrinter().writeValueAsString(jsonObj);

}

return json;

}

public static <T> T fromJson(Class<T> clazz, String json)

throws IOException {

return objMapper.readValue(json, clazz);

}

public static <T> List<T> fromJsonList(Class<T> clazz, String json)

throws IOException {

return objMapper.readValue(json, objMapper.getTypeFactory()

.constructCollectionType(List.class, clazz));

}

public static <K, V> Map<K, V> fromJsonMap(Class<K> keyClazz, Class<V> valueClazz, String json)

throws IOException {

return objMapper.readValue(json, objMapper.getTypeFactory()

.constructMapType(HashMap.class, keyClazz, valueClazz));

}

}

//MonitorMetrics.java

package com.simoniu.hbase.hbasemonitor.util;

import java.util.ArrayList;

import java.util.List;

import java.util.Map;

/**

* Created by jixin on 18-3-24.

*/

public class MonitorMetrics {

List<Map<String, Object>> beans = new ArrayList<>();

public List<Map<String, Object>> getBeans() {

return beans;

}

public void setBeans(List<Map<String, Object>> beans) {

this.beans = beans;

}

public Object getMetricsValue(String name) {

if (beans.isEmpty()) {

return null;

}

return beans.get(0).getOrDefault(name, null);

}

}
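Hadoop's `/jmx` endpoint answers with a JSON object whose `beans` field is an array of attribute maps, which is why `MonitorMetrics` holds a `List<Map<String, Object>>`. The sketch below rebuilds that shape by hand with plain collections (the attribute values are invented sample data). It shows the first-bean lookup next to a hypothetical by-name variant, which would matter if a query matched more than one bean.

```java
import java.util.List;
import java.util.Map;

class BeansLookupDemo {

  // Same rule as MonitorMetrics.getMetricsValue: only the first bean is consulted.
  static Object firstBeanValue(List<Map<String, Object>> beans, String attribute) {
    if (beans == null || beans.isEmpty()) {
      return null;
    }
    return beans.get(0).get(attribute);
  }

  // Hypothetical variant: select the bean by its "name" attribute first.
  static Object valueOf(List<Map<String, Object>> beans, String beanName, String attribute) {
    for (Map<String, Object> bean : beans) {
      if (beanName.equals(bean.get("name"))) {
        return bean.get(attribute);
      }
    }
    return null;
  }

  public static void main(String[] args) {
    // Invented sample data in the shape {"beans":[{...},{...}]}.
    List<Map<String, Object>> beans = List.of(
        Map.<String, Object>of(
            "name", "Hadoop:service=NameNode,name=NameNodeInfo", "TotalBlocks", 40),
        Map.<String, Object>of(
            "name", "Hadoop:service=NameNode,name=FSNamesystemState", "NumLiveDataNodes", 1));
    System.out.println(firstBeanValue(beans, "TotalBlocks"));
    System.out.println(
        valueOf(beans, "Hadoop:service=NameNode,name=FSNamesystemState", "NumLiveDataNodes"));
  }
}
```

Because the tutorial always passes a `qry` parameter that names exactly one bean, looking only at index 0 is sufficient there.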

//StatefulHttpClient.java

package com.simoniu.hbase.hbasemonitor.util;

import java.io.BufferedInputStream;

import java.io.BufferedOutputStream;

import java.io.File;

import java.io.FileOutputStream;

import java.io.IOException;

import java.net.URI;

import java.util.List;

import java.util.Map;

import org.apache.http.HttpEntity;

import org.apache.http.HttpHost;

import org.apache.http.HttpResponse;

import org.apache.http.client.CookieStore;

import org.apache.http.client.config.CookieSpecs;

import org.apache.http.client.config.RequestConfig;

import org.apache.http.client.methods.HttpEntityEnclosingRequestBase;

import org.apache.http.client.methods.HttpGet;

import org.apache.http.client.methods.HttpPost;

import org.apache.http.client.protocol.HttpClientContext;

import org.apache.http.client.utils.URIBuilder;

import org.apache.http.config.Registry;

import org.apache.http.config.RegistryBuilder;

import org.apache.http.conn.util.PublicSuffixMatcher;

import org.apache.http.conn.util.PublicSuffixMatcherLoader;

import org.apache.http.cookie.CookieSpecProvider;

import org.apache.http.entity.ContentType;

import org.apache.http.entity.StringEntity;

import org.apache.http.entity.mime.HttpMultipartMode;

import org.apache.http.entity.mime.MultipartEntityBuilder;

import org.apache.http.impl.client.BasicCookieStore;

import org.apache.http.impl.client.CloseableHttpClient;

import org.apache.http.impl.client.HttpClientBuilder;

import org.apache.http.impl.cookie.DefaultCookieSpecProvider;

import org.apache.http.impl.cookie.RFC6265CookieSpecProvider;

import org.apache.http.util.EntityUtils;

/**

* Stateful HTTP client that keeps cookies across requests.

*/

public class StatefulHttpClient {

private HttpClientContext context;

private CloseableHttpClient httpclient;

private int requestTimeOut = 15;

private int sessionTimeOut = 60;

public StatefulHttpClient(int sessionTimeOut, int requestTimeOut,

HttpHost proxy) {

initCookieStore();

this.sessionTimeOut = sessionTimeOut;

this.requestTimeOut = requestTimeOut;

RequestConfig.Builder requestConfigBuilder = RequestConfig.custom()

.setConnectTimeout(this.requestTimeOut * 1000).setSocketTimeout(this.requestTimeOut * 1000);

if (proxy != null) {

requestConfigBuilder.setProxy(proxy);

}

httpclient = HttpClientBuilder.create()

.setDefaultRequestConfig(requestConfigBuilder.build()).build();

}

public StatefulHttpClient(HttpHost proxy) {

initCookieStore();

RequestConfig.Builder requestConfigBuilder = RequestConfig.custom()

.setConnectTimeout(10000).setSocketTimeout(this.requestTimeOut * 1000);

if (proxy != null) {

requestConfigBuilder.setProxy(proxy);

}

httpclient = HttpClientBuilder.create()

.setDefaultRequestConfig(requestConfigBuilder.build()).build();

}

public int getRequestTimeOut() {

return requestTimeOut;

}

public void setRequestTimeOut(int requestTimeOut) {

this.requestTimeOut = requestTimeOut;

}

public int getSessionTimeOut() {

return sessionTimeOut;

}

public void setSessionTimeOut(int sessionTimeOut) {

this.sessionTimeOut = sessionTimeOut;

}

private void initCookieStore() {

PublicSuffixMatcher publicSuffixMatcher = PublicSuffixMatcherLoader.getDefault();

Registry<CookieSpecProvider> cookieSpecReg = RegistryBuilder.<CookieSpecProvider>create()

.register(CookieSpecs.DEFAULT, new DefaultCookieSpecProvider(publicSuffixMatcher))

.register(CookieSpecs.STANDARD, new RFC6265CookieSpecProvider(publicSuffixMatcher)).build();

CookieStore cookieStore = new BasicCookieStore();

context = HttpClientContext.create();

context.setCookieSpecRegistry(cookieSpecReg);

context.setCookieStore(cookieStore);

}

/**

* http get.

*

* @param clazz clazz

* @param url url

* @param queryParam queryParam

* @param headers headers

* @param <T> T

* @return T

* @throws IOException e

*/

@SuppressWarnings("unchecked")

public <T> T get(Class<T> clazz, String url,

Map<String, String> queryParam, Map<String, String> headers) throws IOException {

HttpGet get = new HttpGet(url);

if (headers != null && headers.size() > 0) {

for (String key : headers.keySet()) {

get.setHeader(key, headers.get(key));

}

}

try {

if (queryParam != null && queryParam.size() > 0) {

URIBuilder builder = new URIBuilder(get.getURI());

for (String key : queryParam.keySet()) {

builder.addParameter(key, queryParam.get(key));

}

get.setURI(builder.build());

}

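} catch (Exception exception) {

// fail instead of silently sending the request without its query parameters

throw new IOException("Invalid query parameters for " + url, exception);

}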

HttpEntity entity = null;

try {

HttpResponse response = httpclient.execute(get, context);

int statusCode = response.getStatusLine().getStatusCode() / 100;

entity = response.getEntity();

String result = EntityUtils.toString(response.getEntity());

if (statusCode == 4 || statusCode == 5) {

throw new IOException(result);

}

if (String.class.equals(clazz)) {

return (T) result;

}

if (result == null || result.length() == 0) {

return null;

}

return JsonUtil.fromJson(clazz, result);

} finally {

if (entity != null) {

EntityUtils.consume(entity);

}

}

}

/**

* http post.

*

* @param clazz clazz

* @param url url

* @param queryParam queryParam

* @param headers headers

* @param <T> T

* @return T

* @throws IOException e

*/

@SuppressWarnings("unchecked")

public <T> T post(Class<T> clazz, String url,

Map<String, String> queryParam, Map<String, String> headers,

String body) throws IOException {

HttpPost post = new HttpPost(url);

if (headers != null && headers.size() > 0) {

for (String key : headers.keySet()) {

post.setHeader(key, headers.get(key));

}

}

try {

if (queryParam != null && queryParam.size() > 0) {

URIBuilder builder = new URIBuilder(post.getURI());

for (String key : queryParam.keySet()) {

builder.addParameter(key, queryParam.get(key));

}

post.setURI(builder.build());

}

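} catch (Exception exception) {

// fail instead of silently sending the request without its query parameters

throw new IOException("Invalid query parameters for " + url, exception);

}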

if (body != null) {

HttpEntity entity = new StringEntity(body,

ContentType.APPLICATION_JSON);

post.setEntity(entity);

}

HttpEntity entity = null;

try {

HttpResponse response = httpclient.execute(post, context);

int statusCode = response.getStatusLine().getStatusCode() / 100;

entity = response.getEntity();

String result = EntityUtils.toString(response.getEntity());

if (statusCode == 4 || statusCode == 5) {

throw new IOException(result);

}

if (Void.class.equals(clazz)) {

return null;

}

if (String.class.equals(clazz)) {

return (T) result;

}

if (result == null || result.length() == 0) {

return null;

}

return JsonUtil.fromJson(clazz, result);

} finally {

if (entity != null) {

EntityUtils.consume(entity);

}

}

}

/**

* http upload.

*

* @param clazz clazz

* @param url url

* @param queryParam queryParam

* @param headers headers

* @param filePaths filepaths

* @param <T> T

* @return T

* @throws IOException e

*/

@SuppressWarnings("unchecked")

public <T> T upload(Class<T> clazz, String url,

Map<String, String> queryParam, Map<String, String> headers, List<String> filePaths)

throws IOException {

HttpPost post = new HttpPost(url);

if (headers != null && headers.size() > 0) {

for (String key : headers.keySet()) {

post.setHeader(key, headers.get(key));

}

}

try {

if (queryParam != null && queryParam.size() > 0) {

URIBuilder builder = new URIBuilder(post.getURI());

for (String key : queryParam.keySet()) {

builder.addParameter(key, queryParam.get(key));

}

post.setURI(builder.build());

}

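} catch (Exception exception) {

// fail instead of silently sending the request without its query parameters

throw new IOException("Invalid query parameters for " + url, exception);

}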

if (filePaths != null) {

MultipartEntityBuilder builder = MultipartEntityBuilder.create();

builder.setMode(HttpMultipartMode.BROWSER_COMPATIBLE);

for (String path : filePaths) {

File file = new File(path);

builder.addBinaryBody("file", file, ContentType.DEFAULT_BINARY, file.getName());

}

HttpEntity entity = builder.build();

post.setEntity(entity);

}

HttpEntity entity = null;

try {

HttpResponse response = httpclient.execute(post, context);

int statusCode = response.getStatusLine().getStatusCode() / 100;

entity = response.getEntity();

String result = EntityUtils.toString(response.getEntity());

if (statusCode == 4 || statusCode == 5) {

throw new IOException(result);

}

if (String.class.equals(clazz)) {

return (T) result;

}

if (result == null || result.length() == 0) {

return null;

}

return JsonUtil.fromJson(clazz, result);

} finally {

if (entity != null) {

EntityUtils.consume(entity);

}

}

}

/**

* http download.

*

* @param url url

* @param queryParam queryParam

* @param headers headers

* @param filePath filepath

* @throws IOException e

*/

@SuppressWarnings("unchecked")

public void download(String url,

Map<String, String> queryParam, Map<String, String> headers, String filePath)

throws IOException {

HttpPost post = new HttpPost(url);

if (headers != null && headers.size() > 0) {

for (String key : headers.keySet()) {

post.setHeader(key, headers.get(key));

}

}

try {

if (queryParam != null && queryParam.size() > 0) {

URIBuilder builder = new URIBuilder(post.getURI());

for (String key : queryParam.keySet()) {

builder.addParameter(key, queryParam.get(key));

}

post.setURI(builder.build());

}

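} catch (Exception exception) {

// fail instead of silently sending the request without its query parameters

throw new IOException("Invalid query parameters for " + url, exception);

}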

HttpEntity entity = null;

try {

HttpResponse response = httpclient.execute(post, context);

int statusCode = response.getStatusLine().getStatusCode() / 100;

entity = response.getEntity();

if (statusCode == 4 || statusCode == 5) {

throw new IOException("HTTP request failed: " + response.getStatusLine());

}

// try-with-resources closes both streams even if the copy fails

try (BufferedInputStream bis = new BufferedInputStream(entity.getContent());

BufferedOutputStream bos = new BufferedOutputStream(new FileOutputStream(new File(filePath)))) {

int inByte;

while ((inByte = bis.read()) != -1) {

bos.write(inByte);

}

}

} finally {

if (entity != null) {

EntityUtils.consume(entity);

}

}

}

/**

* http downloadzip.

*

* @param url url

* @param queryParam queryParam

* @param headers headers

* @param body body

* @param filePath filepath

* @throws IOException e

*/

@SuppressWarnings("unchecked")

public void downloadzip(String url,

Map<String, String> queryParam, Map<String, String> headers, String body, String filePath)

throws IOException {

HttpPost post = new HttpPost(url);

if (headers != null && headers.size() > 0) {

for (String key : headers.keySet()) {

post.setHeader(key, headers.get(key));

}

}

try {

if (queryParam != null && queryParam.size() > 0) {

URIBuilder builder = new URIBuilder(post.getURI());

for (String key : queryParam.keySet()) {

builder.addParameter(key, queryParam.get(key));

}

post.setURI(builder.build());

}

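} catch (Exception exception) {

// fail instead of silently sending the request without its query parameters

throw new IOException("Invalid query parameters for " + url, exception);

}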

if (body != null) {

HttpEntity entity = new StringEntity(body,

ContentType.APPLICATION_JSON);

post.setEntity(entity);

}

HttpEntity entity = null;

try {

HttpResponse response = httpclient.execute(post, context);

int statusCode = response.getStatusLine().getStatusCode() / 100;

entity = response.getEntity();

if (statusCode == 4 || statusCode == 5) {

throw new IOException("HTTP request failed: " + response.getStatusLine());

}

// try-with-resources closes both streams even if the copy fails

try (BufferedInputStream bis = new BufferedInputStream(entity.getContent());

BufferedOutputStream bos = new BufferedOutputStream(new FileOutputStream(new File(filePath)))) {

int inByte;

while ((inByte = bis.read()) != -1) {

bos.write(inByte);

}

}

} finally {

if (entity != null) {

EntityUtils.consume(entity);

}

}

}

/**

* http delete.

*

* @param clazz clazz

* @param url url

* @param queryParam queryParam

* @param headers headers

* @param <T> T

* @return T

* @throws IOException e

*/

@SuppressWarnings("unchecked")

public <T> T delete(Class<T> clazz, String url,

Map<String, String> queryParam, Map<String, String> headers,

String body) throws IOException {

GavialHttpDelete delete = new GavialHttpDelete(url);

if (headers != null && headers.size() > 0) {

for (String key : headers.keySet()) {

delete.setHeader(key, headers.get(key));

}

}

try {

if (queryParam != null && queryParam.size() > 0) {

URIBuilder builder = new URIBuilder(delete.getURI());

for (String key : queryParam.keySet()) {

builder.addParameter(key, queryParam.get(key));

}

delete.setURI(builder.build());

}

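} catch (Exception exception) {

// fail instead of silently sending the request without its query parameters

throw new IOException("Invalid query parameters for " + url, exception);

}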

if (body != null) {

HttpEntity entity = new StringEntity(body,

ContentType.APPLICATION_JSON);

delete.setEntity(entity);

}

HttpEntity entity = null;

try {

HttpResponse response = httpclient.execute(delete, context);

int statusCode = response.getStatusLine().getStatusCode() / 100;

entity = response.getEntity();

String result = EntityUtils.toString(response.getEntity());

if (statusCode == 4 || statusCode == 5) {

throw new IOException(result);

}

if (String.class.equals(clazz)) {

return (T) result;

}

if (result == null || result.length() == 0) {

return null;

}

return JsonUtil.fromJson(clazz, result);

} finally {

if (entity != null) {

EntityUtils.consume(entity);

}

}

}

private static class GavialHttpDelete extends HttpEntityEnclosingRequestBase {

static final String METHOD_NAME = "DELETE";

GavialHttpDelete(final String uri) {

super();

setURI(URI.create(uri));

}

public GavialHttpDelete(final URI uri) {

super();

setURI(uri);

}

public GavialHttpDelete() {

super();

}

public String getMethod() {

return METHOD_NAME;

}

}

}
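`StatefulHttpClient` is built on Apache HttpClient 4.x. For the read-only GET that the JMX monitor needs, the JDK's own `java.net.http` client (Java 11 and later) is a possible alternative. The sketch below shows that swapped-in approach, not the tutorial's class: `SimpleJmxHttp`, `withQuery`, and `isError` are hypothetical names, the timeouts are arbitrary, and no cookie handling is included.

```java
import java.io.IOException;
import java.net.URI;
import java.net.URLEncoder;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;
import java.time.Duration;
import java.util.Map;

class SimpleJmxHttp {

  // Same rule as StatefulHttpClient: 4xx and 5xx responses count as failures.
  static boolean isError(int statusCode) {
    int statusClass = statusCode / 100;
    return statusClass == 4 || statusClass == 5;
  }

  // Appends URL-encoded query parameters to a URL that has no query string yet.
  static String withQuery(String url, Map<String, String> queryParam) {
    if (queryParam == null || queryParam.isEmpty()) {
      return url;
    }
    StringBuilder sb = new StringBuilder(url);
    char separator = '?';
    for (Map.Entry<String, String> entry : queryParam.entrySet()) {
      sb.append(separator)
          .append(URLEncoder.encode(entry.getKey(), StandardCharsets.UTF_8))
          .append('=')
          .append(URLEncoder.encode(entry.getValue(), StandardCharsets.UTF_8));
      separator = '&';
    }
    return sb.toString();
  }

  // Performs a blocking GET and returns the body, or throws on 4xx/5xx.
  static String get(String url, Map<String, String> queryParam)
      throws IOException, InterruptedException {
    HttpClient client = HttpClient.newBuilder()
        .connectTimeout(Duration.ofSeconds(10))
        .build();
    HttpRequest request = HttpRequest.newBuilder(URI.create(withQuery(url, queryParam)))
        .timeout(Duration.ofSeconds(15))
        .GET()
        .build();
    HttpResponse<String> response =
        client.send(request, HttpResponse.BodyHandlers.ofString());
    if (isError(response.statusCode())) {
      throw new IOException("HTTP " + response.statusCode() + ": " + response.body());
    }
    return response.body();
  }
}
```

A call such as `SimpleJmxHttp.get("http://hadoop-master:50070/jmx", Map.of("qry", "Hadoop:service=NameNode,name=NameNodeInfo"))` would return the raw JSON string, which could then be handed to `JsonUtil.fromJson`.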

Hadoop JMX utility class.

//HadoopUtil.java

package com.simoniu.hbase.hbasemonitor.util;

import java.io.IOException;

import java.text.DecimalFormat;

import java.util.ArrayList;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Map.Entry;

import com.simoniu.hbase.hbasemonitor.entity.DataNodeInfo;

import com.simoniu.hbase.hbasemonitor.entity.HdfsSummary;

/**

* Created by jixin on 18-3-24.

*/

public class HadoopUtil {

public static long mbLength = 1048576L;

public static long gbLength = 1073741824L;

// NameNode web address; 50070 is the Hadoop 2.x default port (Hadoop 3.x uses 9870)

public static final String hadoopJmxServerUrl = "http://hadoop-master:50070";

public static final String jmxServerUrlFormat = "%s/jmx?qry=%s";

public static final String nameNodeInfo = "Hadoop:service=NameNode,name=NameNodeInfo";

public static final String fsNameSystemState = "Hadoop:service=NameNode,"

+ "name=FSNamesystemState";

public static HdfsSummary getHdfsSummary(StatefulHttpClient client) throws IOException {

HdfsSummary hdfsSummary = new HdfsSummary();

String namenodeUrl = String.format(jmxServerUrlFormat, hadoopJmxServerUrl, nameNodeInfo);

MonitorMetrics monitorMetrics = client.get(MonitorMetrics.class, namenodeUrl, null, null);

hdfsSummary.setTotal(doubleFormat(monitorMetrics.getMetricsValue("Total"), gbLength));

hdfsSummary.setDfsFree(doubleFormat(monitorMetrics.getMetricsValue("Free"), gbLength));

hdfsSummary

.setDfsUsed(doubleFormat(monitorMetrics.getMetricsValue("Used"), gbLength));

hdfsSummary.setPercentUsed(

doubleFormat(monitorMetrics.getMetricsValue("PercentUsed")));

hdfsSummary.setSafeMode(monitorMetrics.getMetricsValue("Safemode").toString());

hdfsSummary.setNonDfsUsed(

doubleFormat(monitorMetrics.getMetricsValue("NonDfsUsedSpace"), gbLength));

hdfsSummary.setBlockPoolUsedSpace(

doubleFormat(monitorMetrics.getMetricsValue("BlockPoolUsedSpace"),

gbLength));

hdfsSummary

.setPercentBlockPoolUsed(

doubleFormat(monitorMetrics.getMetricsValue("PercentBlockPoolUsed")));

hdfsSummary.setPercentRemaining(

doubleFormat(monitorMetrics.getMetricsValue("PercentRemaining")));

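// Jackson maps JSON integers to Integer or Long depending on size, so convert via Number

hdfsSummary.setTotalBlocks(((Number) monitorMetrics.getMetricsValue("TotalBlocks")).intValue());

hdfsSummary.setTotalFiles(((Number) monitorMetrics.getMetricsValue("TotalFiles")).intValue());

hdfsSummary.setMissingBlocks(((Number) monitorMetrics.getMetricsValue("NumberOfMissingBlocks")).intValue());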

String liveNodesJson = monitorMetrics.getMetricsValue("LiveNodes").toString();

String deadNodesJson = monitorMetrics.getMetricsValue("DeadNodes").toString();

List<DataNodeInfo> liveNodes = dataNodeInfoReader(liveNodesJson);

List<DataNodeInfo> deadNodes = dataNodeInfoReader(deadNodesJson);

hdfsSummary.setLiveDataNodeInfos(liveNodes);

hdfsSummary.setDeadDataNodeInfos(deadNodes);

String fsNameSystemStateUrl = String

.format(jmxServerUrlFormat, hadoopJmxServerUrl,

fsNameSystemState);

MonitorMetrics hadoopMetrics = client

.get(MonitorMetrics.class, fsNameSystemStateUrl, null, null);

hdfsSummary.setNumLiveDataNodes(((Number) hadoopMetrics.getMetricsValue("NumLiveDataNodes")).intValue());

hdfsSummary.setNumDeadDataNodes(((Number) hadoopMetrics.getMetricsValue("NumDeadDataNodes")).intValue());

hdfsSummary

.setVolumeFailuresTotal(((Number) hadoopMetrics.getMetricsValue("VolumeFailuresTotal")).intValue());

return hdfsSummary;

}

public static List<DataNodeInfo> dataNodeInfoReader(String jsonData) throws IOException {

List<DataNodeInfo> dataNodeInfos = new ArrayList<DataNodeInfo>();

Map<String, Object> nodes = JsonUtil.fromJsonMap(String.class, Object.class, jsonData);

for (Entry<String, Object> node : nodes.entrySet()) {

Map<String, Object> info = (HashMap<String, Object>) node.getValue();

String nodeName = node.getKey().split(":")[0];

DataNodeInfo dataNodeInfo = new DataNodeInfo();

dataNodeInfo.setNodeName(nodeName);

dataNodeInfo.setNodeAddr(info.get("infoAddr").toString().split(":")[0]);

dataNodeInfo.setLastContact(((Number) info.get("lastContact")).intValue());

dataNodeInfo.setUsedSpace(doubleFormat(info.get("usedSpace"), gbLength));

dataNodeInfo.setAdminState(info.get("adminState").toString());

dataNodeInfo

.setNonDfsUsedSpace(doubleFormat(info.get("nonDfsUsedSpace"), gbLength));

dataNodeInfo.setCapacity(doubleFormat(info.get("capacity"), gbLength));

dataNodeInfo.setNumBlocks(((Number) info.get("numBlocks")).intValue());

dataNodeInfo.setRemaining(doubleFormat(info.get("remaining"), gbLength));

dataNodeInfo

.setBlockPoolUsed(doubleFormat(info.get("blockPoolUsed"), gbLength));

dataNodeInfo.setBlockPoolUsedPerent(doubleFormat(info.get("blockPoolUsedPercent")));

dataNodeInfos.add(dataNodeInfo);

}

return dataNodeInfos;

}

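// Locale.ROOT keeps '.' as the decimal separator so Double.parseDouble can read the result in any locale

public static DecimalFormat df = new DecimalFormat("#.##", java.text.DecimalFormatSymbols.getInstance(java.util.Locale.ROOT));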

public static double doubleFormat(Object num, long unit) {

double result = Double.parseDouble(String.valueOf(num)) / unit;

return Double.parseDouble(df.format(result));

}

public static double doubleFormat(Object num) {

double result = Double.parseDouble(String.valueOf(num));

return Double.parseDouble(df.format(result));

}

}
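`HadoopUtil` does two small numeric conversions over and over: byte counts are divided by `gbLength` and rounded to two decimals, and integer attributes are pulled out of an untyped `Object`. The sketch below isolates both as a worked example. `MetricNumbers`, `toLong`, and `bytesToGb` are hypothetical names, and rounding uses `Math.round` rather than `DecimalFormat`, so it is an alternative under that assumption and can differ from the tutorial's code on exact ties.

```java
class MetricNumbers {

  // 1024^3 bytes, the same value as HadoopUtil.gbLength.
  static final long GB = 1073741824L;

  // Accepts Integer, Long, or a numeric string, which covers what a JSON parser may return.
  static long toLong(Object value) {
    if (value instanceof Number) {
      return ((Number) value).longValue();
    }
    return Long.parseLong(String.valueOf(value));
  }

  // Converts a byte count to gigabytes, rounded to two decimal places.
  static double bytesToGb(Object bytes) {
    double gb = (double) toLong(bytes) / GB;
    return Math.round(gb * 100.0) / 100.0;
  }

  public static void main(String[] args) {
    // 1610612736 bytes / 1073741824 = 1.5 GB
    System.out.println(bytesToGb(1610612736L));
    // A value beyond the int range still converts without a ClassCastException.
    System.out.println(toLong(5000000000L));
  }
}
```

Going through `Number` matters because a JSON parser may hand back `Integer` for small values and `Long` for large ones, and a direct `(int)` cast only works for the former.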

Test class.

//MonitorApp.java

package com.simoniu.hbase.hbasemonitor.app;


import com.simoniu.hbase.hbasemonitor.util.HadoopUtil;

import com.simoniu.hbase.hbasemonitor.util.StatefulHttpClient;

import java.io.IOException;

/**

* Created by jixin on 18-3-24.

*/

public class MonitorApp {

public static void main(String[] args) throws IOException {

StatefulHttpClient client = new StatefulHttpClient(null);

HadoopUtil.getHdfsSummary(client).printInfo();

}

}

Output:

HDFS SUMMARY INFO

totalBlocks:40

totalFiles:130

numLiveDataNodes:1

nodeName:hadoop-master

numBlocks:38

----------------------
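The `nodeName` printed above comes from the keys of the `LiveNodes` map: `dataNodeInfoReader` keeps only the part before the first colon. The sketch below isolates that step, assuming keys of the form `host:port` as the `split(":")` call implies. `LiveNodeKeyDemo` and `hostOf` are hypothetical names and the sample key is invented.

```java
class LiveNodeKeyDemo {

  // Returns the host part of a "host:port" key, or the whole key if there is no colon.
  static String hostOf(String liveNodeKey) {
    int colon = liveNodeKey.indexOf(':');
    return colon < 0 ? liveNodeKey : liveNodeKey.substring(0, colon);
  }

  public static void main(String[] args) {
    // Invented sample key; the port is only an example value.
    System.out.println(hostOf("hadoop-master:50010"));
  }
}
```

Splitting at the first colon would not work for keys that contain a literal IPv6 address, so this holds only for hostname or IPv4 style keys.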